In high-volume ad platforms, scale rarely feels like a clean victory. As traffic grows, infrastructure spending often accelerates faster than revenue, quietly compressing the margins you worked to build. Many leadership teams accept this as inevitable. But if you want to reduce AdTech infrastructure costs, you have to reject the premise that growth and expense are permanently locked together.

A monthly cloud bill is not just a vendor invoice. It is a report card on engineering efficiency. When costs balloon, it doesn’t automatically signal that you are growing; it often reveals that architectural decisions are simply buckling under load. A high bill isn’t a badge of scale; it is a signal that the system is leaking value.

Vendors don’t impose inefficiency. Architecture does. Whether you are optimizing a real-time bidder or managing custom supply-side platform development, cost acts as a design variable, not a fixed tax. You cannot negotiate your way to profitability; you have to engineer your way there by making efficiency a physical property of the code.

This is often framed as a FinOps problem, but that misses the point. Dashboards and governance tools only describe the spending; they don’t change the physics of why it’s happening. Sustainable cost control requires treating efficiency as a structural constraint, woven directly into how the platform processes data at scale.

Compute Economics: Stateless Systems and Interruptible Capacity

Compute is usually the biggest line item in an AdTech P&L. The instinct is to just buy Reserved Instances to lower the hourly rate. But real efficiency comes from changing what you buy, not just how you pay for it.

A stateless architecture lets you reduce AdTech infrastructure costs by changing your relationship with reliability. You stop paying a premium for uptime guarantees and instead build a system that survives volatility.

The Spot Market Arbitrage

Cloud pricing isn’t static. It’s a market. The way stateless architecture reduces cloud costs is simple: it lets you buy capacity on the volatile Spot Market instead of the expensive On-Demand one.

AdTech platforms run 24/7, but cloud providers have massive piles of idle servers that fluctuate by the second. You can buy this capacity for pennies—if your code is brave enough to handle the risk.

- Volatility as Leverage: Use price drops to lower your blended compute cost.

- Market-Based Procurement: Treat servers like a commodity trade, not a fixed subscription.

Why Cloud Providers Sell “Trash” Capacity at 90% Off

Amazon and Google view unused servers as perishable. If a server sits empty for an hour, that money is gone forever. To get something for it, they sell interruptible capacity at massive markdowns.

This isn’t a loyalty discount. It’s a clearance sale. The catch is simple: they can take the server back on very short notice, typically a two-minute warning on AWS and about 30 seconds on Google Cloud.

- Perishable Asset Theory: Unsold server time has zero value, so providers discount it heavily.

- Clearance Pricing: You can get up to 90% off, but you have to accept the risk of sudden preemption.

- Arbitrage Opportunity: Smart architectures exploit the massive gap between On-Demand and Spot prices.
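To make the arbitrage concrete, here is a minimal sketch of how a blended hourly rate could be estimated for a fleet that mixes Spot and On-Demand capacity. The function name, the $1.00 hourly rate, the 70% discount, and the 80% Spot share are all illustrative assumptions, not provider quotes.

```python
def blended_hourly_cost(on_demand_rate: float, spot_discount: float,
                        spot_share: float) -> float:
    """Average hourly cost per instance for a fleet mixing Spot and On-Demand."""
    if not 0.0 <= spot_discount <= 1.0 or not 0.0 <= spot_share <= 1.0:
        raise ValueError("spot_discount and spot_share must be between 0 and 1")
    spot_rate = on_demand_rate * (1.0 - spot_discount)
    return spot_share * spot_rate + (1.0 - spot_share) * on_demand_rate


# Hypothetical inputs: $1.00/hour On-Demand, 70% Spot discount, 80% of the fleet on Spot.
cost = blended_hourly_cost(on_demand_rate=1.00, spot_discount=0.70, spot_share=0.80)
print(f"Blended rate: ${cost:.2f}/hour")
```

With these assumed inputs the blended rate comes out at well under half the On-Demand price, which is why the Spot share of the fleet matters more than the headline discount.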

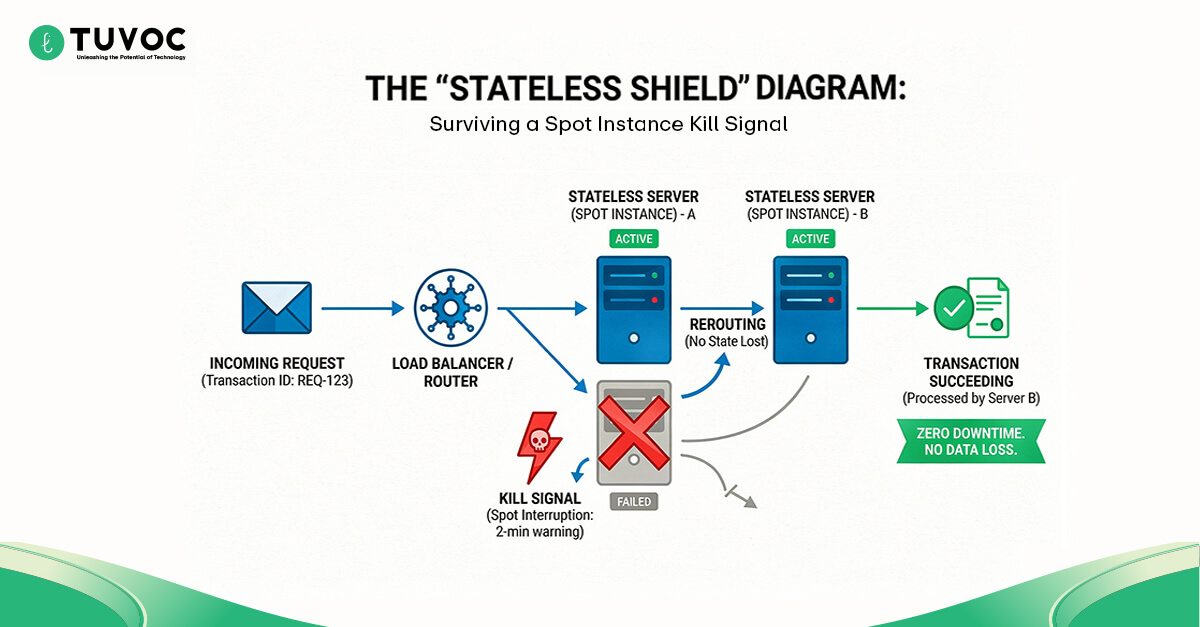

The “Stateless” Shield: Immunizing Revenue from Server Failure

You can’t use cheap, volatile servers if a crash kills a transaction. The solution is adopting stateless architecture patterns. By separating the logic from the user data, you make the individual server disposable.

If a Spot Instance gets reclaimed, the transaction just routes to the next node. The infrastructure becomes fragile so the business can stay robust.

- Decoupled State: Keep user data outside the app server so crashes don’t matter.

- Disposable Infrastructure: Treat servers like temporary tools, not permanent assets.

- Resilient Routing: Automatically move traffic when a node gets the kill signal.
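The three ideas above can be sketched in a few lines. This is a toy simulation, not a production router: `BidderNode`, `NodeReclaimed`, and `route` are hypothetical names, and a plain dictionary stands in for whatever external state store (Redis or similar) a real platform would use.

```python
from dataclasses import dataclass


class NodeReclaimed(Exception):
    """Raised when a node was reclaimed by the provider before it could answer."""


@dataclass
class BidderNode:
    name: str
    alive: bool = True

    def handle(self, user_id: str, state_store: dict) -> str:
        if not self.alive:
            raise NodeReclaimed(self.name)
        # All user state is read from the shared store, never from node memory.
        segment = state_store.get(user_id, "unknown")
        return f"{self.name} bid for segment {segment}"


def route(user_id: str, nodes: list, state_store: dict) -> str:
    """Try each node in order; a reclaimed node just means trying the next one."""
    for node in nodes:
        try:
            return node.handle(user_id, state_store)
        except NodeReclaimed:
            continue
    raise RuntimeError("no healthy nodes available")


store = {"user-42": "sports"}  # stands in for an external state store
fleet = [BidderNode("spot-a", alive=False), BidderNode("spot-b")]
print(route("user-42", fleet, store))
```

Because the node holds no user data, losing `spot-a` costs one retry rather than one transaction.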

Rightsizing vs. Over-Provisioning: Reducing Waste at Scale

Buying a Spot Instance at a 90% discount is useless if it sits 50% empty. Too many teams pad their specs with “safety buffers,” buying huge CPUs for small jobs. Server rightsizing strategies are just the discipline of trimming this fat.

In high-volume systems, over-provisioning isn’t safety—it’s expensive cowardice. Precision sizing ensures you pay only for the compute you actually use.

- Utilization Audits: Check CPU usage to spot where you’re paying for air.

- Precision Provisioning: Match the instance type exactly to the job. No padding.

- Eliminating Safety Buffers: Cut the “just in case” capacity that bloats the bill.
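A utilization audit can start as a very small calculation. The sketch below assumes you already export CPU utilization samples (in percent) per instance; the function names, the P95 choice, and the 70% target are assumptions for illustration, and the result is a starting point for load testing rather than a verdict.

```python
import math


def percentile(samples: list, pct: float) -> float:
    """Nearest-rank percentile of a non-empty list of numbers."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100.0 * len(ordered)))
    return ordered[rank - 1]


def recommended_vcpus(current_vcpus: int, cpu_util_samples: list,
                      target_util: float = 70.0) -> int:
    """Smallest vCPU count that keeps P95 utilization at or below target_util."""
    p95 = percentile(cpu_util_samples, 95)
    needed = current_vcpus * p95 / target_util
    return max(1, math.ceil(needed))


# Hypothetical audit: a 16-vCPU instance that rarely exceeds 35% CPU.
samples = [20, 25, 22, 30, 28, 35, 24, 26, 21, 27]
print(recommended_vcpus(16, samples))
```

In this made-up case the instance could be halved and still keep its busiest periods under the target.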

Runtime Efficiency: How Language Choices Shape Infrastructure

Your choice of programming language is a financial constraint. In high-throughput system design, the efficiency of your code dictates the physical volume of hardware you must rent. For CPU-bound work, a compiled language typically handles more requests per second on the same hardware than an interpreted one.

This isn’t preference; it is math. Compiled languages like Rust or Go are translated to native machine code ahead of time. Interpreted languages like Python spend extra CPU cycles translating code while it runs. At scale, that overhead compounds into a significant monthly expense.

The Hardware-Software Coupling Tax

Software is not abstract. Every line of code requires energy to execute. If your code is inefficient, you are forced to rent more servers to compensate. The AdTech infrastructure total cost of ownership is heavily influenced by this hardware-software relationship.

If your language requires 20% more CPU cycles to parse a bid request, you must provision 20% more cores to handle the same load.

- Execution Overhead: Inefficient code forces you to scale hardware linearly with traffic.

- The Volume Multiplier: Small per-request inefficiencies become massive costs at a billion-request scale.
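The 20% example can be checked with back-of-the-envelope arithmetic. The sketch below assumes a purely CPU-bound workload; the traffic figure, per-request CPU times, and 70% utilization target are hypothetical.

```python
import math


def cores_required(qps: float, cpu_ms_per_request: float,
                   target_utilization: float = 0.7) -> int:
    """Cores needed so that average CPU utilization stays at target_utilization."""
    busy_cores = qps * cpu_ms_per_request / 1000.0  # CPU-seconds consumed per second
    return math.ceil(busy_cores / target_utilization)


baseline = cores_required(qps=500_000, cpu_ms_per_request=0.50)
slower = cores_required(qps=500_000, cpu_ms_per_request=0.60)  # 20% more CPU per request
print(baseline, slower, f"{slower / baseline - 1:.0%} more cores")
```

The extra cores track the extra CPU time almost one for one, which is the coupling the section describes.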

Interpreted vs. Compiled: The Cost of a CPU Cycle

The debate of Rust vs. Python for high-throughput systems is settled by economics. Python imposes a “translation tax” on every request, requiring the CPU to interpret code into machine logic; Rust does this work upfront.

In a high-frequency environment, switching to a compiled language can drastically reduce server count, turning a scaling problem into a fixed utility cost.

- The Translation Tax: Interpreted languages consume CPU cycles just to understand the code.

- Squeeze the Hardware: Compiled languages simply fit more requests onto the same chip. You buy fewer servers because each one does more work.

- Kill the Spikes: Garbage-collected runtimes pause to reclaim memory. In AdTech, those pauses look like timeouts. Rust has no garbage collector at all, and Go’s concurrent collector is designed to keep pauses very short.

The Translation Tax

| Metric | Interpreted (e.g., Python) | Compiled (e.g., Rust/Go) | The “Tax” |
|---|---|---|---|
| Execution | Real-time translation | Direct machine code | High CPU Overhead |
| Garbage Collection | Unpredictable pauses | None (Rust) / short concurrent pauses (Go) | Latency Spikes |
| Concurrency | Limited (Global Interpreter Lock) | Native / High | Lower Throughput |
| Cost Impact | High (More Servers) | Low (Fewer Servers) | 20-50% Premium |

The “Developer Convenience” vs. “Cloud Bill” Trade-off

Optimizing for “fast coding” often means optimizing for “slow execution.” Python is famous for developer speed, but that speed comes with a runtime penalty. In programmatic advertising infrastructure, this trade-off is dangerous.

You are effectively transferring cost from your payroll (developers) to your cloud bill (servers). While this makes sense for a prototype, it is inefficient for a scaled platform.

- Cost Transfer: Moving expense from one-time development to recurring infrastructure rent.

- The Prototype Trap: Building a high-scale system with low-scale tools creates technical debt.

- Operational Drag: Easy-to-write code often creates hard-to-manage infrastructure bills.

Data Lifecycle Management and Storage Economics

AdTech generates petabytes of data, but not all data is created equal. AdTech data storage strategies must strictly differentiate between “Hot” data needed for real-time decisions and “Cold” data needed only for compliance.

The most common architectural mistake is treating all data as “Hot.” Keeping years of logs in expensive, high-performance databases is a fast way to bleed margin. You are paying premium rent for dead assets.

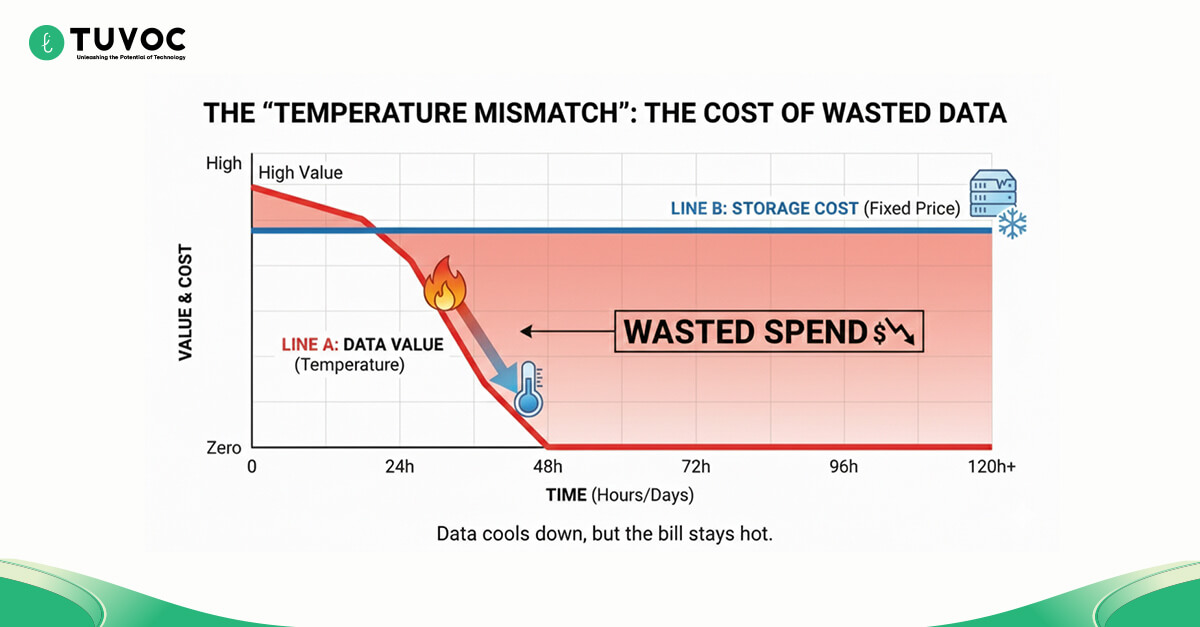

The Cost of Temperature Mismatch

Data value decays over time, but storage costs do not unless you intervene. A bid request log is critical for 24 hours. After 30 days, it is dead weight.

Optimizing storage costs for AdTech log data requires a strict “temperature” policy. You must automate the movement of data from high-cost tiers to low-cost archives the moment its value drops.

- The 24-Hour Cliff: A log file is gold for about a day. After that, its value drops toward zero. Stop paying premium rent for dead data.

- No Humans Allowed: If you rely on engineers to manually archive logs, it won’t happen. The move to cold storage must be a ruthless, automated default.
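A temperature policy is ultimately a rule that maps age to tier. The sketch below uses the 24-hour and 30-day boundaries mentioned above; the tier names and the function are illustrative assumptions. In practice the same rule would more likely live in the object store's own lifecycle configuration than in application code.

```python
from datetime import datetime, timedelta, timezone


def storage_tier(written_at: datetime, now: datetime) -> str:
    """Map a log object's age to a storage tier (thresholds are illustrative)."""
    age = now - written_at
    if age < timedelta(hours=24):
        return "hot"
    if age < timedelta(days=30):
        return "warm"
    return "cold"


now = datetime(2025, 1, 31, tzinfo=timezone.utc)
for age in (timedelta(hours=2), timedelta(days=5), timedelta(days=45)):
    print(age, "->", storage_tier(now - age, now))
```

The point of expressing the policy as code or configuration is that nobody has to remember to run it.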

Hot vs. Cold: The 23x Price Difference

The price gap between storage tiers is extreme. S3 Standard is roughly 23x more expensive than Glacier Deep Archive. Understanding cold storage economics allows you to leverage this massive price arbitrage.

By automating the movement of data from Hot to Cold based on age, you can cut your storage bill by 90% without deleting a single file.

- The 23x Multiple: The price difference between tiers turns classification into high-yield work.

- Classification ROI: Moving data is often more profitable than optimizing code.

- The Storage Fallacy: Storing low-value logs on high-performance disks is financial incompetence.
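As a rough check on the 90% figure, the sketch below compares an all-hot archive with one where only a small slice stays hot. The 1,000 TB volume, the $23 per TB hot price, and the 5% hot fraction are assumptions chosen for round numbers; retrieval and transition fees are deliberately ignored.

```python
def monthly_storage_cost(total_tb: float, hot_fraction: float,
                         hot_price_per_tb: float, price_ratio: float = 23.0) -> float:
    """Monthly cost when hot_fraction of the data stays hot and the rest goes cold."""
    cold_price_per_tb = hot_price_per_tb / price_ratio
    hot_tb = total_tb * hot_fraction
    cold_tb = total_tb - hot_tb
    return hot_tb * hot_price_per_tb + cold_tb * cold_price_per_tb


# Hypothetical archive: 1,000 TB at an assumed $23 per TB-month for the hot tier.
all_hot = monthly_storage_cost(1000, hot_fraction=1.0, hot_price_per_tb=23.0)
tiered = monthly_storage_cost(1000, hot_fraction=0.05, hot_price_per_tb=23.0)
print(f"all hot: ${all_hot:,.0f}  tiered: ${tiered:,.0f}  saved: {1 - tiered / all_hot:.0%}")
```

Under these assumptions the tiered layout costs roughly a tenth of the all-hot one, without deleting anything.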

The “Storage Temperature” Matrix

| Data Age | Business Value | Correct Storage Tier | Relative Cost Factor |
|---|---|---|---|
| 0 – 24 Hours | Critical (Real-time Decisioning) | S3 Standard (Hot) | $$$$$ (100%) |
| 1 – 30 Days | Low (Debugging/Analytics) | S3 Standard-IA (Warm) | $$$ (55%) |
| 30+ Days | Zero (Compliance Only) | Glacier Deep Archive (Cold) | $ (4%) |

The Mistake: Storing “Cold” logs in “Hot” tiers = 96% Wasted Spend.

The “Write Once, Read Never” Trap of AdTech Logs

99% of AdTech data is compliance logs. They are written once and never queried again unless an audit occurs. Storing them in a queryable database is financial negligence.

Effective cloud cost management for AdTech dictates that these logs belong in a “digital freezer.” They should be compressed, encrypted, and buried in the cheapest storage class available.

- Hoarding vs. Archiving: There is a massive difference between data you need to query and data you just need to have. Never put “just in case” data on high-speed disks.

- The Digital Freezer: Archive tiers are perfect for data that is strictly for insurance.

- Write Once, Pay Less: Never pay query-level prices for write-only compliance data.

Network Economics: The Cost of Moving Data

AdTech is a high-frequency trading environment where data movement is constant. To reduce AdTech infrastructure costs, you must audit not just your servers but also your topology. Cloud providers charge for data that moves between zones and regions.

In a “chatty” architecture, this movement tax can rival your compute spend. If you treat bandwidth as free, you will be punished by the bill. You have to treat distance as a cost.

The Bandwidth Tax

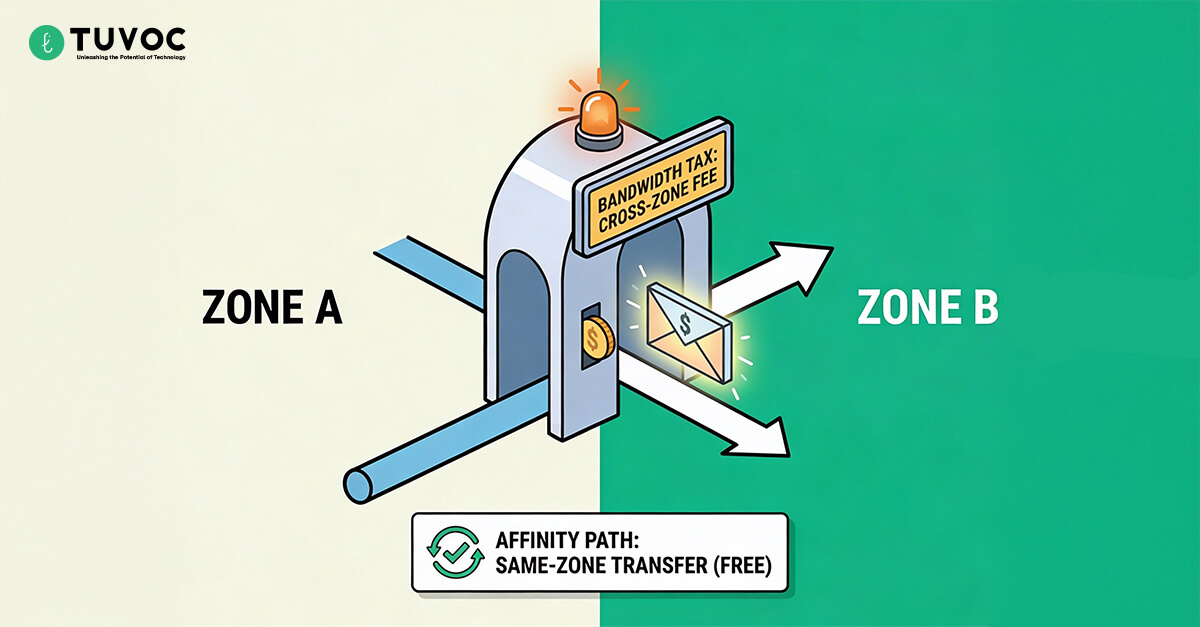

In high-frequency systems, moving data is often more expensive than processing it. The culprit is usually uncontrolled cross-zone traffic. If your Bidder sits in Zone A and your Database sits in Zone B, you pay a toll on every packet.

At AdTech scale, this “Bandwidth Tax” accumulates rapidly. It often appears as a mysterious, unallocated cost on the bill that finance teams can’t explain but engineering teams must fix.

- The Zone Toll: Crossing availability zones triggers data transfer fees that do not exist within a single zone.

- Chatty Protocols: APIs that request too much data too often amplify these transfer costs.
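To see how quickly the zone toll adds up, here is a small estimate. Every input is a hypothetical placeholder: the request rate, the payload size, the share of calls that cross zones, and the per-GB price should be replaced with your own figures and your provider's current rates.

```python
def monthly_cross_zone_cost(qps: float, bytes_per_request: int,
                            price_per_gb: float, cross_zone_fraction: float) -> float:
    """Estimated monthly fee for traffic that crosses availability zones."""
    seconds_per_month = 30 * 24 * 3600
    gb_per_month = qps * bytes_per_request * seconds_per_month / 1e9
    return gb_per_month * cross_zone_fraction * price_per_gb


# Hypothetical bidder: 200k lookups/s, 2 KB exchanged per lookup,
# half of the lookups leaving the zone, $0.02 per GB assumed round-trip price.
cost = monthly_cross_zone_cost(200_000, 2_000, price_per_gb=0.02, cross_zone_fraction=0.5)
print(f"${cost:,.0f} per month")
```

Setting `cross_zone_fraction` to zero is the whole argument for keeping conversations local.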

Availability Zone Affinity: Local Conversations are Cheaper

The solution is an architectural discipline known as availability zone affinity. This pattern ensures that a Bidder in Zone A prioritizes talking to a Database replica in Zone A.

By keeping the conversation local to the zone, you eliminate the cross-zone toll entirely. Good architecture respects geography. It keeps the heavy lifting inside the same room.

- Zone Containment: Keeping traffic local eliminates the per-GB transfer fee entirely.

- Respect Geography: Your services need to know where they live. A bidder in Zone A should never accidentally ask a database in Zone B for data it could get locally.

- The “Local” Discount: Staying inside the availability zone isn’t just faster; it’s the only way to make data transfer free.
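Zone affinity boils down to a lookup that prefers the caller's own zone and only falls back elsewhere when it has to. The sketch below is a simplified illustration; the zone names, endpoints, and `pick_replica` function are hypothetical, and real deployments usually get this behavior from their service mesh or client library.

```python
def pick_replica(caller_zone: str, replicas: dict) -> str:
    """Return a replica endpoint in the caller's zone, else any available one."""
    local = replicas.get(caller_zone)
    if local:
        return local[0]
    # Fallback crosses a zone boundary and pays the transfer fee.
    for zone in sorted(replicas):
        if replicas[zone]:
            return replicas[zone][0]
    raise LookupError("no replicas available")


replicas = {
    "zone-a": ["db-a1.internal"],
    "zone-b": ["db-b1.internal"],
    "zone-c": [],
}
print(pick_replica("zone-a", replicas))  # stays local
print(pick_replica("zone-c", replicas))  # no local replica, falls back
```

The fallback path keeps the bidder available when a zone loses its replica, at the price of paying the toll until it is restored.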

Private Interconnects vs. Public Internet

Routing internal traffic over the public internet exposes you to variable pricing and security risks. Reducing AWS cross-zone data transfer costs often involves using AWS PrivateLink or VPC Peering.

This doesn’t just lower the unit cost of bandwidth. It provides a predictable, flat-rate pipe for your heaviest data flows, insulating your P&L from public internet volatility.

- Flat-Rate Pipes: Public internet egress pricing is volatile. Private interconnects lock in a predictable rate for your heaviest flows.

- Dark Traffic: Keep internal chatter off the public web. If it’s not on the internet, it’s much harder to attack.

- Smooth the Stream: The public web is noisy and jittery. Dedicated paths give you the consistent, flat latency that real-time bidding requires.

Efficiency Feedback Loops at Scale

Optimization is nonlinear. AdTech latency optimization isn’t just about speed; it is about compounding value. Every millisecond of latency removed is money saved on the bill and capacity gained on the floor.

When you shave 10 ms off a bid request, you don’t just save time. You free up the CPU to handle the next request sooner. You aren’t just saving money; you are manufacturing capacity without buying new hardware.

Non-Linear Returns on Optimization

Optimization creates capacity out of thin air. In high-throughput systems, Queries Per Second (QPS) is the denominator of your cost structure.

If you can handle more QPS with the same hardware, your unit economics improve geometrically. You delay the need to scale out, effectively getting a server upgrade for free.

- Capacity Generation: Code efficiency delays the need for expensive hardware expansion.

- Geometric Returns: Small gains in speed yield massive improvements in total capacity.

Latency Reduction as Capacity Generation

A faster server finishes tasks sooner, returning to the “Available” pool quicker. Focusing on P99 latency is critical because the slowest requests define your fleet size.

If you cut the average time a request occupies a worker in half, you effectively double the throughput of your existing servers. Even a modest 10% reduction lets you handle roughly 11% more traffic with zero new hardware.

- Quick Release: Faster tasks free up threads for new requests immediately.

- Fleet Compression: Lower latency means fewer servers are needed for the same load.

- Zero-Cost Scale: Handling growth through code efficiency rather than credit cards.
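The relationship between latency and fleet size follows from Little's Law: with a fixed number of workers, sustainable throughput is the worker count divided by the time each request holds a worker. The sketch below uses hypothetical figures (64 workers per server, one million requests per second) and ignores queueing headroom.

```python
import math


def max_throughput(workers: int, avg_latency_ms: float) -> float:
    """Requests per second one server sustains when every worker is busy."""
    return workers * 1000.0 / avg_latency_ms


def fleet_size(total_qps: float, workers: int, avg_latency_ms: float) -> int:
    """Servers needed to carry total_qps at the given per-request latency."""
    return math.ceil(total_qps / max_throughput(workers, avg_latency_ms))


before = fleet_size(1_000_000, workers=64, avg_latency_ms=20.0)
after = fleet_size(1_000_000, workers=64, avg_latency_ms=10.0)
print(before, "servers at 20 ms ->", after, "servers at 10 ms")
```

Halving the time each request holds a worker roughly halves the fleet for the same load.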

Dynamic Scaling: Paying for Demand, Not Overhead

Static infrastructure is a debt instrument. True AdTech cloud cost optimization requires infrastructure that breathes with the market.

You should never pay for 3 PM peak capacity at 3 AM. Autoscaling rules must be aggressive. Scale up instantly when traffic spikes, and scale down ruthlessly the moment it drops.

- Market Breathing: Infrastructure size should mirror the traffic curve exactly.

- Ruthless Contraction: Shutting down servers the millisecond demand drops saves massive cash.

- Debt Avoidance: Static servers are financial liabilities during off-peak hours.
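A scaling rule of this kind can be reduced to one formula: size the fleet to current traffic at a target utilization, with a floor for availability. The sketch below is a simplified stand-in for a real autoscaler; the per-instance capacity, the 75% target, and the traffic numbers are assumptions.

```python
import math


def desired_instances(current_qps: float, qps_per_instance: float,
                      target_utilization: float = 0.75,
                      min_instances: int = 2) -> int:
    """Instance count that serves current_qps at the target utilization."""
    needed = current_qps / (qps_per_instance * target_utilization)
    return max(min_instances, math.ceil(needed))


# Hypothetical traffic curve: afternoon peak versus overnight trough.
peak = desired_instances(current_qps=600_000, qps_per_instance=4_000)
trough = desired_instances(current_qps=45_000, qps_per_instance=4_000)
print(f"3 PM: {peak} instances, 3 AM: {trough} instances")
```

A static fleet sized for the peak would keep every one of those afternoon instances running through the night.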

The “10ms” Rule: How Speed Frees Up Budget

In AdTech, every millisecond saved on processing is a millisecond gained for “Decisioning.” A modern AdTech infrastructure architecture converts speed into intelligence.

Speed buys you the time to run smarter, more profitable algorithms without timing out. You use infrastructure savings to fund revenue generation.

- Time Arbitrage: Saving I/O time to spend on ML processing time.

- Smarter Bids: Faster infrastructure allows for more complex, profitable algorithms.

- Revenue Fuel: Efficiency doesn’t just cut costs; it enables better decisions.

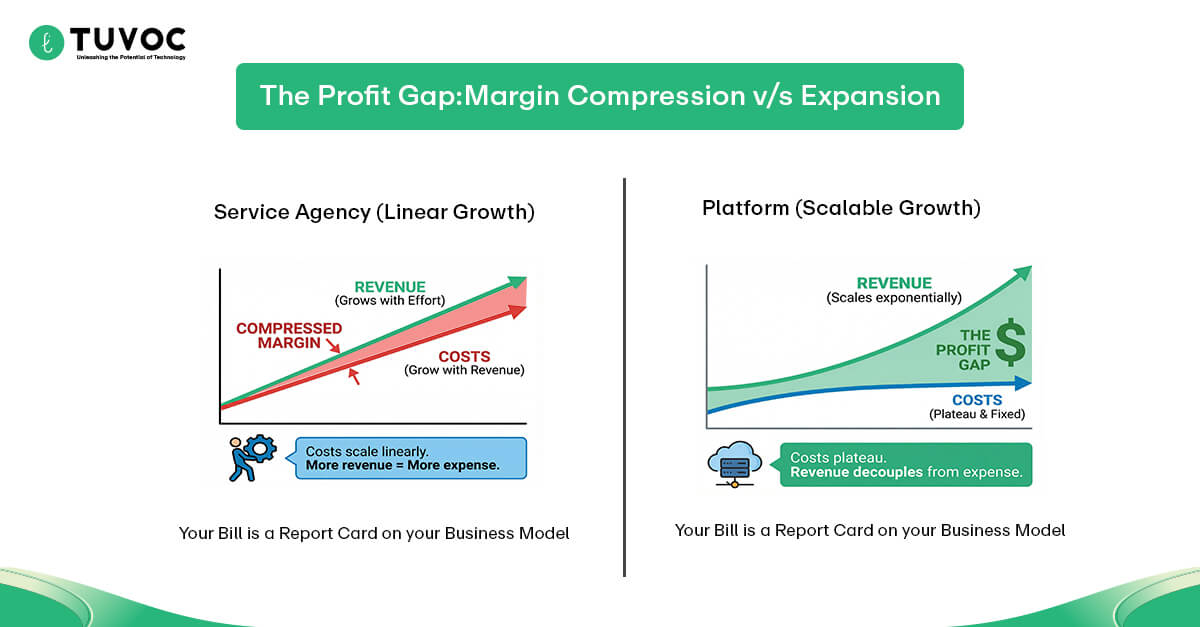

Gross Margin Defense

The goal of every platform is to keep margins high even as traffic explodes. To reduce AdTech infrastructure costs sustainably, you must decouple your cost growth from your revenue growth.

If your cloud bill doubles every time your revenue doubles, you do not own a software platform; you own a low-margin service agency. You have to break the link between traffic volume and invoice size.

Decoupling Revenue from Expense

The defining trait of a software company is that costs do not scale linearly with revenue. Investors look for gross margin expansion, the widening gap between the money you make and the money you spend to make it.

Architectural efficiency is the wedge that drives this gap open. It ensures that every new dollar of revenue comes with a higher profit margin than the last.

- Nonlinear Scaling: Revenue should climb vertically while costs flatten out.

- The Efficiency Wedge: Better code creates the profit room that investors demand.

The Divergence Point: Linear Growth vs. Logarithmic Cost

If your cloud bill grows 1:1 with your revenue (Linear), you are in trouble. True platforms engineer a “profit gap” where revenue climbs while infrastructure costs plateau (Logarithmic).

This shift often requires rethinking OpEx vs CapEx. You invest engineering hours (CapEx) to build custom layers that permanently lower your monthly rental fees (OpEx).

- The Service Trap: Linear cost growth kills your valuation multiple.

- The Profit Wedge: Your goal is simple: revenue goes up and to the right, but costs flatten out. That gap is your margin.

- Build Once, Save Forever: You spend engineering hours (CapEx) today to permanently lower your monthly rental bill (OpEx) tomorrow.
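The difference between the two curves shows up directly in gross margin. The sketch below compares a cost that tracks revenue 1:1 with one that grows logarithmically; the starting figures ($1M revenue, $400k infrastructure cost) and the specific logarithmic shape are illustrative assumptions, not a model of any real platform.

```python
import math


def gross_margin(revenue: float, infra_cost: float) -> float:
    """Share of revenue left after infrastructure cost."""
    return (revenue - infra_cost) / revenue


def linear_cost(revenue: float, base_revenue: float, base_cost: float) -> float:
    """Cost that grows 1:1 with revenue."""
    return base_cost * revenue / base_revenue


def logarithmic_cost(revenue: float, base_revenue: float, base_cost: float) -> float:
    """Cost that adds one base_cost each time revenue doubles."""
    return base_cost * (1 + math.log2(revenue / base_revenue))


base_revenue, base_cost = 1_000_000.0, 400_000.0
revenue = 8_000_000.0  # 8x growth
print(f"linear: {gross_margin(revenue, linear_cost(revenue, base_revenue, base_cost)):.0%}")
print(f"logarithmic: {gross_margin(revenue, logarithmic_cost(revenue, base_revenue, base_cost)):.0%}")
```

In the linear case the margin never moves; in the logarithmic case it widens as revenue grows, which is the gap the section describes.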

Surviving Low-Margin Environments

Efficient infrastructure allows you to bid on cheap inventory that inefficient competitors can’t touch. This is the core of cloud economics in AdTech: your cost per transaction defines your addressable market.

If it costs you $0.05 to process a bid, you can profitably bid on inventory that pays $0.06. A competitor with a $0.08 processing cost loses money on that same inventory. Efficiency lets you enter markets others can’t afford.

The Efficiency Floor

Efficiency is not just about saving money; it is about market access. Your real-time bidding infrastructure establishes an “Efficiency Floor.”

If you lower your processing cost to $0.01, you unlock a massive ocean of lower-cost inventory that is profitable for you but toxic for your competitors.

- Play in the Shallows: Low operating costs let you bid on cheap inventory that your competitors can’t touch without losing money.

- Starve the Competition: When you can profit at $0.05 CPM and they need $0.08 to break even, you effectively lock them out of the market.
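The efficiency floor can be expressed as a simple filter: inventory is addressable only if it pays more than it costs you to process. The sketch below reuses the $0.05 and $0.08 cost figures from this section; the list of inventory payouts is made up for illustration.

```python
def addressable_inventory(payouts: list, processing_cost: float) -> list:
    """Inventory payouts that still leave a profit after processing cost."""
    return [p for p in payouts if p > processing_cost]


# Hypothetical payouts per unit of inventory, from cheapest to priciest.
payouts = [0.02, 0.06, 0.07, 0.12]

print(addressable_inventory(payouts, processing_cost=0.05))  # efficient bidder
print(addressable_inventory(payouts, processing_cost=0.08))  # inefficient bidder
```

The efficient bidder can work three of the four price points while the inefficient one is confined to the most expensive, which is how a lower cost floor translates into a larger market.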

Bidding on “Trash” Inventory: Where Inefficient Competitors Die

Low-value inventory (cheap CPMs) is unprofitable for inefficient bidders because their processing cost is higher than the potential profit. Often, the hidden killer is data transfer fees.

Focused cloud egress cost reduction allows you to harvest this massive volume profitably. You scrape pennies from billions of requests while competitors lose money just looking at them.

- Volume Play: Turning low-margin “trash” into high-volume aggregate profit.

- Egress Discipline: Minimizing data transfer fees to keep cheap inventory viable.

- Profit Scavenging: Making money on the inventory everyone else ignores.

Weaponized Thrift: Using Low Cost to Win Price Wars

When you have the lowest cost structure in the market, you can lower your prices to starve competitors without bleeding cash yourself.

Reducing cloud infrastructure costs in AdTech isn’t just a defensive strategy; it’s a weapon. You can force inefficient players into negative margins and consolidate market share.

- Price War Resilience: You can survive price cuts that bankrupt your rivals.

- Margin Compression: Forcing competitors to operate at a loss to match you.

- Strategic Starvation: Using efficiency to drain the competition’s cash reserves.

Efficiency as a Strategic Constraint

Low cost is a competitive moat. It allows you to outlast competitors in price wars and survive market downturns. Ultimately, the ability to reduce AdTech infrastructure costs is what separates the enduring platforms from the temporary players.

It turns your engineering team from a cost center into a strategic asset. By leveraging superior AdTech development services to build a lean engine, you ensure survival when the market tightens and capital becomes expensive.

Appendix: The AdTech Cost-Efficiency Litmus Test

Are you truly optimized, or just paying for waste?

- Compute: Are you using Spot instances for 80% of stateless workloads?

- Runtime: Does your language choice inflate CPU costs per request?

- Storage: Is cold compliance data sitting in expensive hot storage?

- Network: Are you paying cross-zone tolls for local traffic?

- Scale: Does latency reduction directly lower your server fleet count?

- Margin: Do costs flatline while revenue scales, or do they track?

But cost savings are just the defensive game. A high-performance architecture creates an offensive advantage: Speed. In the next chapter, we explore how millisecond-level latency wins deals before your competitors even place a bid.

FAQs

It isn’t just the sheer number of requests. It is the “weight” of each request. Scale acts like a magnifying glass. If your code is heavy or your data path is sloppy, traffic doesn’t just grow your business; it multiplies your inefficiencies until the bill eats the profit.

This happens when your system is just a pass-through. If you don’t engineer leverage, such as aggressive caching, batching, or compression, you are forced to rent one new server for every new unit of traffic, preventing true margin expansion.

You have to assume the server will die. By decoupling the bidding logic from the user data, you can treat servers as disposable. If one disappears, the load balancer simply retries the request on a neighbor node instantly.

Cloud providers charge a toll every time data crosses the street from “Zone A” to “Zone B.” If your bidder and database are in different zones and talk constantly, that invisible toll eventually costs more than the servers themselves.

A slow server is a busy server. If you optimize your code to finish tasks twice as fast, your existing servers return to the “available” pool sooner. This means you can handle the same traffic with a smaller fleet.

Manoj Donga

Manoj Donga is the MD at Tuvoc Technologies, with 17+ years of experience in the industry. He has strong expertise in the AdTech industry, handling complex client requirements and delivering successful projects across diverse sectors. Manoj specializes in PHP, React, and HTML development, and supports businesses in developing smart digital solutions that scale as business grows.
