Key Takeaways:

- Identity accuracy drives targeting stability

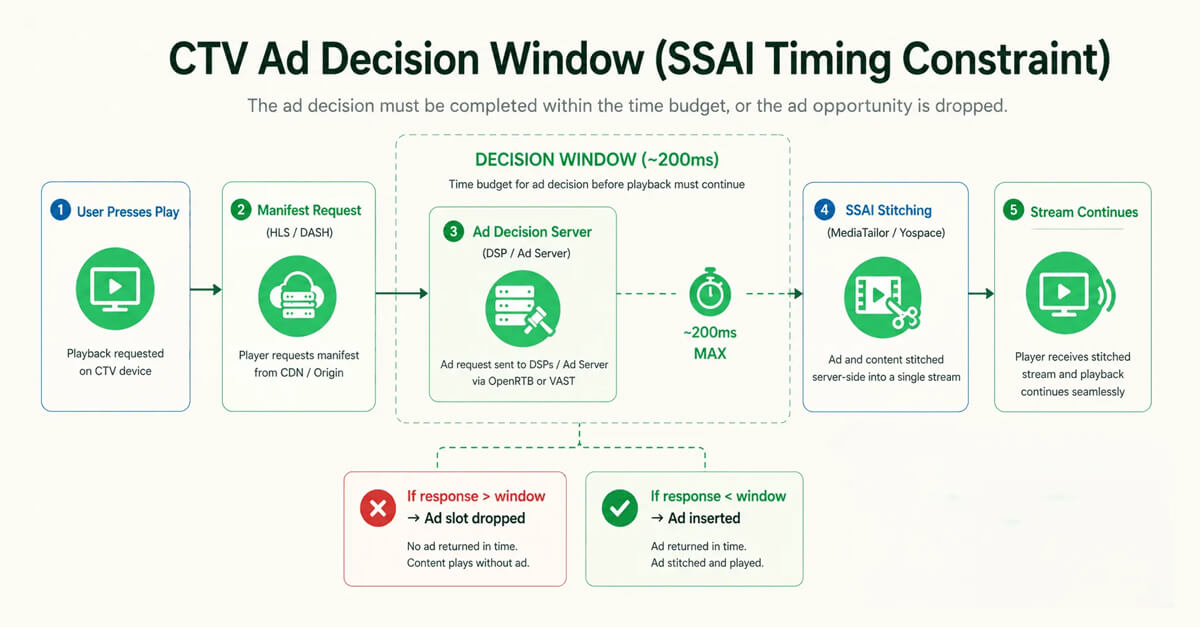

- SSAI introduces timing constraints

- Attribution depends on real-time pipelines

- Ownership improves coordination

CTV AdTech strategy is about how platforms control data, delivery, and decisions to drive performance in streaming environments. AdTech didn’t really “evolve” into CTV. It got pushed. Pages worked fine when everything was click-based. But streams don’t behave like that. Identity, delivery, and measurement all start drifting once video becomes continuous.

This is where AdTech Strategy for CTV stops being an upgrade conversation and turns into a systems problem. Real-time bidding still exists, sure, but real-time bidding platform development now has to deal with timing windows that don’t wait. Miss it, and the ad just doesn’t show.

So platforms aren’t just tools anymore. They’re pipelines. Identity flows into delivery, delivery into attribution. If one breaks, the rest don’t compensate. And that’s why this shift is less about features, more about control. Ownership starts to matter more than capability.

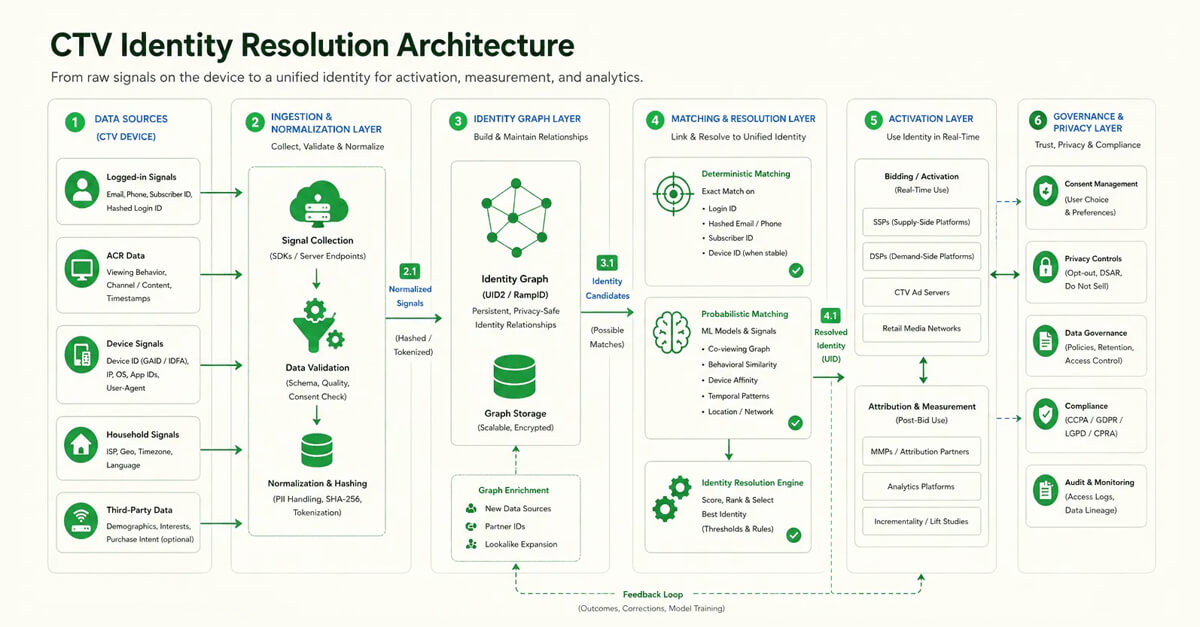

CTV Identity Resolution: Deterministic vs Probabilistic Matching

CTV identity sounds simple until you try to resolve a real user. One screen, multiple people, no clean separation. Household-level targeting works, until it doesn’t.

AdTech Strategy for CTV depends on identity accuracy, but in practice, match rates often sit in the 40–70% range depending on device graphs and login signals. In several implementations, we have seen match rates look stable in reports but break at execution, especially when identity refresh cycles lag behind delivery logs. The rest falls back to probabilistic assumptions, which introduce noise into bidding and frequency control.

Frameworks like UID2 and RampID try to stabilize this, but fragmentation persists. Identity graphs aren’t updated in real time either. Most pipelines refresh in cycles, so decisions are often made on slightly outdated identity states. That’s where drift begins. Small at first, but persistent.

In one implementation, identity accuracy dropped not because IDs were missing, but because refresh cycles lagged behind delivery logs. The system had data, just not at the moment it needed it.

The system tries to decide who is watching. If identity is accurate, ads feel relevant. If not, you may see repeated or mismatched ads across sessions.

Deterministic vs Probabilistic Reality

| Dimension | Deterministic Matching | Probabilistic Matching |
|---|---|---|
| Input signals | Login ID, hashed email, subscriber ID | Device graph, behavior, co-viewing patterns |
| Accuracy | High when a signal exists | Variable, depends on model confidence |
| Coverage | Limited to logged-in users | Broader but less precise |
| Stability | Consistent across sessions | Can shift between sessions |
| Failure mode | Missing users | Misidentifying users |
| Impact on bidding | Clean targeting | Noisy decision signals |
| Impact on frequency | Controlled | Over/under exposure risk |
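The trade-off in the table can be sketched as a two-step lookup: use a login-backed match when one exists, and accept a device-graph guess only above a confidence floor. This is a minimal sketch, not a production resolver. The `Resolution` type, the `resolve_identity` function, the input shapes, and the 0.7 threshold are all hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical confidence floor; a real system would tune this per campaign.
MIN_PROBABILISTIC_CONFIDENCE = 0.7


@dataclass
class Resolution:
    user_id: Optional[str]
    method: str  # "deterministic", "probabilistic", or "unresolved"
    confidence: float


def resolve_identity(login_ids: dict, device_graph: dict, device_id: str) -> Resolution:
    """Prefer a login-backed match; fall back to a device-graph guess."""
    if device_id in login_ids:
        return Resolution(login_ids[device_id], "deterministic", 1.0)
    guess = device_graph.get(device_id)  # (user_id, confidence) or None
    if guess and guess[1] >= MIN_PROBABILISTIC_CONFIDENCE:
        return Resolution(guess[0], "probabilistic", guess[1])
    # Returning "unresolved" keeps low-confidence guesses out of bidding.
    return Resolution(None, "unresolved", 0.0)
```

Keeping the method and confidence on every result lets downstream bidding and frequency capping treat inferred matches differently from confirmed ones.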

Why Household-Level Identity Breaks Cookie Logic

Cookie logic assumes one device represents one user, and household-level identity targeting in CTV inherits that assumption. It breaks almost immediately in shared viewing environments. A single screen often represents multiple viewers with different intents, behaviors, and purchase signals. Cookie-based logic was built for individual sessions, not shared environments.

In practice, CTV relies on device graphs and IP-level clusters to approximate users. Identity frameworks such as Unified ID 2.0 or LiveRamp RampID improve match quality, but they still depend on login signals, which rarely stay consistent across devices.

- Shared device bias: Multiple users collapse into one identity without separation

- Signal dilution: Behavior becomes blended, reducing targeting precision in the long run.

The Cost of Probabilistic Guessing at CTV CPMs

The gap between probabilistic and deterministic identity matching becomes expensive in CTV because inventory is priced higher. When targeting relies on inferred signals instead of confirmed identity, errors don’t just affect accuracy; they affect cost efficiency.

At CPMs often ranging between $20–$40, even small mismatches scale quickly across campaigns. Probabilistic systems fill gaps where identity is missing, but they introduce variance in frequency control and audience targeting, leading to overspend without proportional return.

- Cost amplification: Higher CPM magnifies the impact of small identity errors

- Frequency drift: The same user receives repeated ads due to weak matching
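To make the cost amplification concrete, the arithmetic can be sketched as below. The CPM range comes from the text; the 10 million impressions and the 10% mismatch rate are illustrative assumptions, and `misdirected_spend` is a hypothetical helper name.

```python
def misdirected_spend(impressions: int, cpm_usd: float, mismatch_rate: float) -> float:
    """Spend on impressions that reached the wrong audience."""
    if not 0.0 <= mismatch_rate <= 1.0:
        raise ValueError("mismatch_rate must be between 0 and 1")
    total_spend = impressions / 1000 * cpm_usd  # CPM is the price per 1,000 impressions
    return total_spend * mismatch_rate


# Illustrative inputs: 10M impressions, 10% mismatch, at both ends of the CPM range.
for cpm in (20.0, 40.0):
    print(f"CPM ${cpm:.0f}: ${misdirected_spend(10_000_000, cpm, 0.10):,.0f} misdirected")
```

The same mismatch rate costs twice as much at the top of the CPM range, which is why identity errors that were tolerable in display become visible in CTV budgets.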

When a 10% Error Becomes a 30% Margin Leak

Revenue loss from probabilistic identity matching rarely shows up as a direct miss. A small mismatch in audience targeting starts compounding across high CPM impressions, especially when the same user is misclassified repeatedly.

At CTV pricing levels, even a 10% targeting error doesn’t stay linear. It spreads across frequency, duplication, and missed conversions, quietly pulling margins down more than expected.

- Compounding effect: Small targeting errors repeat across impressions and inflate costs

- Hidden leakage: Revenue loss appears gradually across frequency and conversion gaps

Why Identity Resolution Breaks at the System Level

Identity resolution infrastructure in CTV is often treated as a vendor capability, but the real limitation sits in how systems are connected. Identity doesn’t behave like a standalone service. It is often connected with data collection, storage, and sync.

Providers such as LiveRamp and frameworks such as Unified ID 2.0 supply identity layers. But if those layers are not tightly tied to the ad auction and delivery, the IDs don’t get used consistently. The issue usually isn’t whether identity exists. It’s whether the system can actually use it in time.

- Pipeline dependency: Identity accuracy depends on how systems exchange signals

- Sync latency: Delayed updates create a mismatch between identity and behavior
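Sync latency can be measured directly by comparing when an identity record was last refreshed with when a decision used it. The following is a minimal sketch under stated assumptions: the 15-minute tolerance, the function names, and the input shape are hypothetical, not taken from any vendor API.

```python
from datetime import datetime, timedelta

# Hypothetical tolerance: how old an identity snapshot may be at decision time.
MAX_IDENTITY_AGE = timedelta(minutes=15)


def identity_is_fresh(last_refresh: datetime, decision_time: datetime,
                      max_age: timedelta = MAX_IDENTITY_AGE) -> bool:
    """True when the identity snapshot is recent enough to trust for this decision."""
    return timedelta(0) <= decision_time - last_refresh <= max_age


def stale_share(events: list[tuple[datetime, datetime]]) -> float:
    """Fraction of (last_refresh, decision_time) pairs decided on stale identity."""
    if not events:
        return 0.0
    stale = sum(1 for refresh, decided in events
                if not identity_is_fresh(refresh, decided))
    return stale / len(events)
```

Tracking the stale share over time makes the drift visible before it shows up as mismatched targeting in reports.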

Why shared viewing distorts identity signals in CTV

According to eMarketer, average CTV viewing currently stands at 2 hours and 15 minutes per day. With that much screen time, several household members often use the same device, sometimes within a single session.

That overlap makes isolating individual behavior harder when identity relies on device-level grouping.

- Shared sessions: Multiple viewers interact within the same device context

- Identity blending: Signals merge across users, reducing targeting precision

Why CTV Attribution Starts With the Identity Graph, Not the Pixel

In attribution modeling, the CTV identity graph takes over the role that pixels once played in web environments. Pixels rely on user-level tracking, which is limited in CTV due to device constraints and privacy controls.

Instead, attribution systems depend on identity graphs that connect exposure, session data, and downstream actions. Without that graph, events remain isolated. Even platforms with strong delivery systems struggle to link impressions to outcomes without a consistent identity layer.

- No direct tracking: Pixels cannot reliably track users across CTV environments

- Graph dependency: Attribution requires linking events through shared identity nodes
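Graph-based linking can be sketched as a join through a shared household node. This is an illustrative simplification: the 7-day window, the last-touch rule, the tuple layouts, and the `attribute` function are assumptions made for the example, not a description of any specific attribution product.

```python
from datetime import datetime, timedelta


def attribute(exposures, conversions, graph, window=timedelta(days=7)):
    """Link conversions to earlier ad exposures that share a household node.

    exposures:   list of (device_id, campaign, timestamp)
    conversions: list of (device_id, order_id, timestamp)
    graph:       dict mapping device_id -> household_id (the identity graph)
    """
    by_household = {}
    for device, campaign, ts in exposures:
        household = graph.get(device)
        if household is not None:
            by_household.setdefault(household, []).append((ts, campaign))

    matches = []
    for device, order_id, ts in conversions:
        household = graph.get(device)
        candidates = [
            (seen, campaign)
            for seen, campaign in by_household.get(household, [])
            if timedelta(0) <= ts - seen <= window
        ]
        if candidates:
            # Last-touch: credit the most recent qualifying exposure.
            matches.append((order_id, max(candidates)[1]))
    return matches
```

A conversion on a phone is credited to a TV exposure only because both devices map to the same household; without the graph entry, the two events stay isolated.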

CTV AdTech Architecture: Why Legacy Systems Fail at Scale

Legacy AdTech systems were built around page-based interactions, where requests are discrete and timing is flexible. Small payloads, predictable timing. Streaming doesn’t follow that script. With AdTech architecture for CTV platforms, the mismatch shows up immediately.

A banner request might be a few KB. A video stream segment? Often 1–3 MB, with decisioning windows of 200–300 ms or less before playback stalls. OpenRTB 2.6 carries CTV signals such as device.devicetype and ad pod fields, but the underlying pipeline still assumes retry logic and delayed responses.

Streaming doesn’t allow that kind of response. This isn’t underperformance. The protocol assumptions themselves don’t match streaming behavior.

CASE IN PRACTICE

A retail streaming client needed to process large volumes of viewing and interaction data without delays affecting reporting or targeting. Their existing pipeline relied on batch processing, which introduced lag and inconsistencies in attribution.

We recently built a streaming ingestion layer. In the process, we moved away from a queue-heavy ingestion model where requests were processed sequentially under load and shifted to a stateless processing layer that handled events independently.

This removed request blocking under load and kept response times stable during traffic spikes. The platform scaled to 100K RPS and handled about 2 billion events, each processed in under 50 ms. Data was structured directly into PostgreSQL without loss or replay cycles.

The shift wasn’t limited to throughput. Attribution latency dropped from hours to near real-time, and campaign decisions started reflecting actual user behavior instead of delayed snapshots.

Why do video streams break banner-based systems?

Legacy AdTech pipelines were built for banners, where requests are lightweight and predictable. Comparing CTV video ad payloads with display ad sizes shows how video streams introduce significantly larger payloads and stricter delivery constraints.

A display request may carry minimal data, while a CTV request includes video duration, ad pod structure, and device context under OpenRTB 2.6. This increase in payload size and complexity puts pressure on systems not designed for continuous video delivery.

- Payload imbalance: Video segments far exceed traditional banner request sizes

- Processing overhead: Larger payloads increase latency across delivery pipelines

Why does video metadata become a bottleneck at scale?

Video delivery requires far more contextual data than display advertising. At scale, video ad metadata processing becomes a core constraint, not a secondary concern.

Each request must include stream position, device type, and content attributes. Fields like device.devicetype=7 and content.livestream expand request complexity, while SSAI systems interpret ad pod structures in real time. This increases processing load across high-frequency pipelines.

- Context expansion: More attributes increase decision complexity per request

- Throughput pressure: Metadata parsing slows high-frequency system performance

Why does scaling legacy AdTech systems fail for CTV?

Scaling legacy systems often means adding capacity, not changing architecture. But scaling legacy AdTech systems for CTV workloads exposes timing and coordination limits that infrastructure alone cannot solve.

Under load, queue contention delays decisions beyond usable windows. Retry logic that works on display becomes ineffective in streaming, where missed decisions lead to dropped impressions instead of delayed responses.

- Queue contention: Request spikes delay decisions beyond usable time windows

- Retry limitation: Missed ad calls cannot be recovered during playback

You Can’t Patch Physics

CTV streaming infrastructure limitations show up when systems try to respond after playback has already moved forward. Timing isn’t adjustable here. The stream continues whether the decision is ready or not.

Adding more servers or retries doesn’t fix it. If the response misses the window, the slot is gone. A delay of 120–150 ms can cause entire ad pods to miss their insertion windows. Dashboards still show the system as ‘healthy’ while revenue falls without triggering any alert. At scale, these misses don’t look dramatic; they just keep accumulating.

- Timing constraint: Decisions must complete before playback reaches the ad break

- No recovery path: Missed windows cannot be retried during active streaming
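The no-retry constraint can be sketched with a hard deadline around the decision call. This is a minimal illustration using Python's `asyncio.wait_for`; the 250 ms budget sits inside the 200–300 ms range mentioned earlier, and `fast_bidder`, `slow_bidder`, and `decide_with_deadline` are hypothetical stand-ins for real upstream systems.

```python
import asyncio


async def decide_with_deadline(fetch_decision, budget_s: float):
    """Return the ad decision, or None if it misses the insertion window."""
    try:
        return await asyncio.wait_for(fetch_decision(), timeout=budget_s)
    except asyncio.TimeoutError:
        # No retry: by the time a retry returned, playback would have passed the break.
        return None


async def fast_bidder():
    await asyncio.sleep(0.01)
    return "ad-123"


async def slow_bidder():
    await asyncio.sleep(0.5)  # simulates a lagging upstream system
    return "ad-456"


async def main():
    on_time = await decide_with_deadline(fast_bidder, budget_s=0.25)
    late = await decide_with_deadline(slow_bidder, budget_s=0.25)
    return on_time, late
```

Running `asyncio.run(main())` returns a decision for the fast path and `None` for the slow one. Counting those `None` results is one way to surface missed windows that a standard health check would not report.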

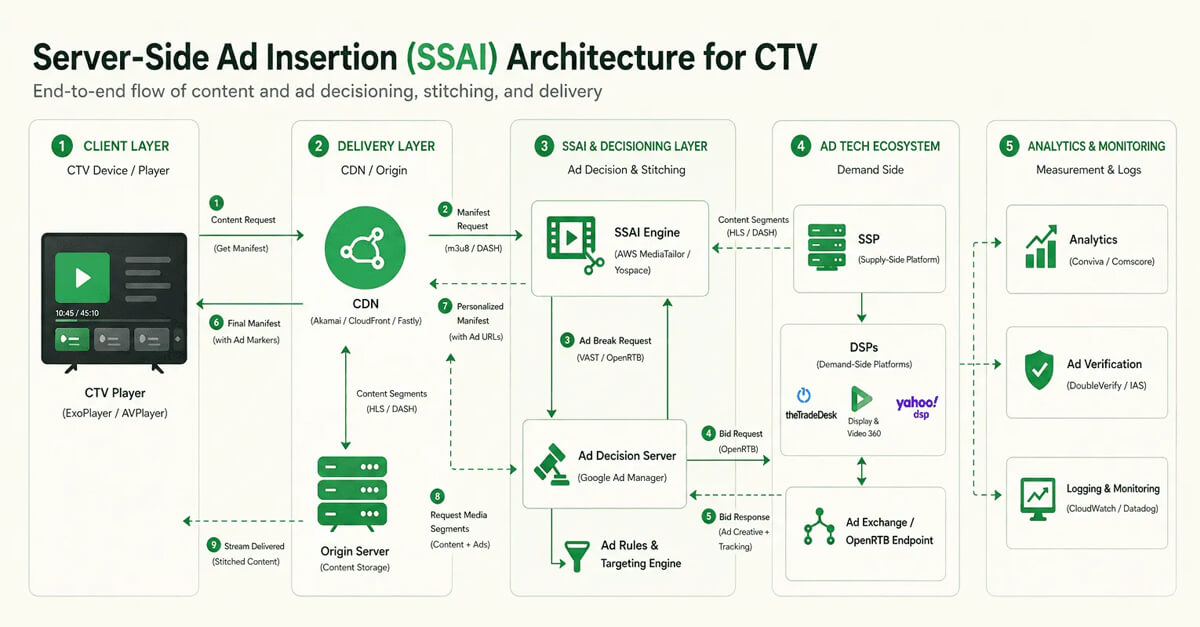

SSAI for CTV Platforms: Architecture, Implementation, and Delivery Control

Client-side insertion works until timing becomes non-negotiable. In CTV, it is. A missed decision window during a mid-roll break doesn’t degrade performance; it drops the ad entirely.

That’s why SSAI implementation for CTV platforms becomes necessary. The player isn’t really in control here. The stream is already prepared, with ads placed directly into the video playlist (HLS .m3u8 or DASH) before playback reaches that point.

Platforms like AWS MediaTailor or Yospace handle this well. Reliable delivery, fewer drops. But you give up some client-side signals and depend more on server logs. Delivery stabilizes, but measurement loses some direct visibility.

In high-scale environments, decisioning pipelines often operate under sub-100 ms constraints, similar to systems handling tens of thousands of requests per second in real-time event processing.

At Tuvoc, we have seen cases where a 150 ms upstream delay caused entire ad pods to miss insertion. Those slots simply go unmonetized.

```jsonc
{
  "id": "ctv_auth_2026_001",
  "imp": [{
    "video": {
      "mimes": ["video/mp4", "application/x-mpegURL"], // MP4 and HLS
      "protocols": [2, 3, 5, 6], // VAST 2.0 and 3.0, plus their wrapper variants
      "w": 1920, "h": 1080,
      "ext": {
        "poddur": 120, // Total pod duration: 2 minutes
        "podseq": 1, // The first pod in the stream
        "slotinpod": 1, // The first slot in the pod
        "minadpodduration": 15,
        "maxadpodduration": 60
      }
    }
  }],
  "device": {
    "devicetype": 7, // Set Top Box in the OpenRTB device type list (3 is Connected TV)
    "os": "Roku OS",
    "ext": {
      "atts": 1, // App Tracking Transparency signal
      "ifa": "38400000-8cf0-11bd-b23e-10b96e40000d" // Deterministic ID
    }
  },
  "regs": {
    "gdpr": 0,
    "ext": { "gpp": "DBABMA~BVV…7Y" } // Global Privacy Platform string
  }
}
```

Developer Note: Legacy systems often fail to parse the video.ext object in OpenRTB 2.6, causing them to ignore pod duration constraints and break ad sequencing.
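One defensive way to avoid the parsing failure described in the note is to look for pod fields in both places they may appear. The sample request above carries them under video.ext, while OpenRTB 2.6 also defines `poddur`, `podseq`, and `slotinpod` directly on the video object. The sketch below is an assumption about how a bidder might normalize the two; `pod_constraints` is a hypothetical helper, and it expects plain JSON without inline comments.

```python
import json


def pod_constraints(bid_request_json: str) -> list[dict]:
    """Extract pod fields from each video impression, wherever they appear."""
    request = json.loads(bid_request_json)
    pods = []
    for imp in request.get("imp", []):
        video = imp.get("video")
        if video is None:
            continue  # not a video impression
        ext = video.get("ext", {})
        # Check the video object first, then fall back to video.ext.
        pods.append({
            key: video.get(key, ext.get(key))
            for key in ("poddur", "podseq", "slotinpod")
        })
    return pods
```

A field missing from both locations comes back as `None`, so callers can tell "no pod constraint sent" apart from "constraint ignored".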

Ads are stitched directly into the video stream. You don’t see loading or switching; the ad plays like part of the content itself.

Why Client-Side Logic Fails at TV Scale

Client-side ad insertion breaks down in CTV because the player cannot pause, fetch, and render ads inside a narrow window without a visible interruption. What worked for web pages doesn’t translate to streaming conditions.

In reality, the player becomes a bottleneck. Ad requests compete with playback, network variability, and device performance. SSAI systems move this logic upstream, preparing the stream before it reaches the viewer, which avoids missed insertions but shifts visibility to server logs.

- Player bottleneck: Ad loading competes with playback and device performance

- Upstream control: Decisioning shifts to the server before playback reaches the break

Latency Budgets and the Physics of Streaming

Ad insertion latency for CTV streaming is governed by how quickly a decision can be made before playback reaches the next ad break. Unlike display, once the stream starts, there’s almost no room for delay.

Decision windows are tight. In many setups, they sit somewhere in the few-hundred-millisecond range. Platforms like AWS MediaTailor try to prepare decisions ahead of time, but if upstream systems lag, the slot is missed instead of being delayed.

- No buffer window: Playback cannot pause while waiting for ad decisions

- Timing pressure: Late responses result in dropped impressions, not retries

If the system responds in time, you see a targeted ad. If it doesn’t, the content continues without ads. There’s no buffering; just a lost opportunity.

Buffering Is a Revenue Event, Not a UX Issue

Video buffering’s impact on CTV ad revenue isn’t just about experience. When playback stalls near an ad break, insertion timing shifts, and some ads never get delivered.

The loss doesn’t always show clearly. It appears as underfilled pods or missed impressions, especially when buffering overlaps with decision windows during streaming.

- Missed delivery: Buffering disrupts timing, causing ads to be skipped

- Revenue drift: Underfilled pods reduce monetization without obvious signals

Ad Pod Management: Where SSAI Determines Revenue

Ad pod management in SSAI becomes a revenue lever because multiple ads must be selected, sequenced, and delivered within a single break. It’s not just about filling a slot; it’s about optimizing the entire pod.

SSAI systems control how pods are structured and filled, including ad duration, order, and frequency. Mismanagement leads to underfilled pods or poorly sequenced ads, both of which directly impact yield and viewer experience.

- Pod sequencing: Multiple ads must align with timing and viewer context

- Revenue impact: Underfilled pods reduce monetization per stream session
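Pod filling can be sketched as a packing problem: choose ads whose durations fit the break while favoring higher-paying seconds. The example below uses a simple greedy heuristic, which is an assumption for illustration and not how any particular SSAI vendor fills pods. It is not guaranteed to find the best fill, and the `Ad` type and sample values are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Ad:
    ad_id: str
    duration_s: int  # assumed to be positive
    cpm_usd: float


def fill_pod(candidates: list[Ad], pod_duration_s: int) -> list[Ad]:
    """Greedy fill: take the best-paying ads per second until nothing else fits."""
    ranked = sorted(candidates, key=lambda ad: ad.cpm_usd / ad.duration_s, reverse=True)
    pod, remaining = [], pod_duration_s
    for ad in ranked:
        if ad.duration_s <= remaining:
            pod.append(ad)
            remaining -= ad.duration_s
    return pod


def unfilled_seconds(pod: list[Ad], pod_duration_s: int) -> int:
    """Seconds of the break left without an ad."""
    return pod_duration_s - sum(ad.duration_s for ad in pod)
```

With a 60-second pod and candidates of 15, 30, 30, and 60 seconds, the greedy pass can leave 15 seconds empty even though a full fill exists. That gap is the underfill the section describes, and it is the reason pod-level optimization matters more than slot-level selection.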

Why server-side measurement shifts budget decisions

Server-side measurement frameworks are changing how advertisers react to performance data. IAB/TVTechnology reports that around 75% of advertisers using these approaches report reallocating budgets based on observed outcomes.

This reflects a shift toward signals that can be validated earlier in the pipeline, rather than relying on modeled or delayed attribution.

- Budget reallocation: Spend shifts based on measurable performance signals

- Signal trust: Verified data influences decisions more than modeled outputs

Why Legacy Stacks Treat SSAI as a Plugin

SSAI integration in CTV often fails because SSAI is treated as a plugin rather than a core system layer. Legacy stacks attempt to plug SSAI into existing pipelines without restructuring how decisions and delivery are coordinated.

This creates mismatches between bidding, identity, and delivery layers. SSAI handles stitching, but upstream systems still operate independently, leading to delays, inconsistent targeting, and reduced control over ad execution.

- Loose coupling: SSAI operates separately from bidding and identity systems

- Coordination gap: Disconnected layers lead to inconsistent ad delivery decisions

Control Planes vs. Delivery Planes

SSAI control plane architecture separates decision logic from stream delivery, but legacy systems often blur this boundary. When both operate together, coordination starts breaking under load.

At scale, delivery continues even when control signals lag behind. That mismatch creates inconsistencies between what was decided and what actually gets inserted into the stream.

- Layer separation: Control and delivery systems operate on different timelines

- Coordination gap: Decisions may not align with actual stream execution timing

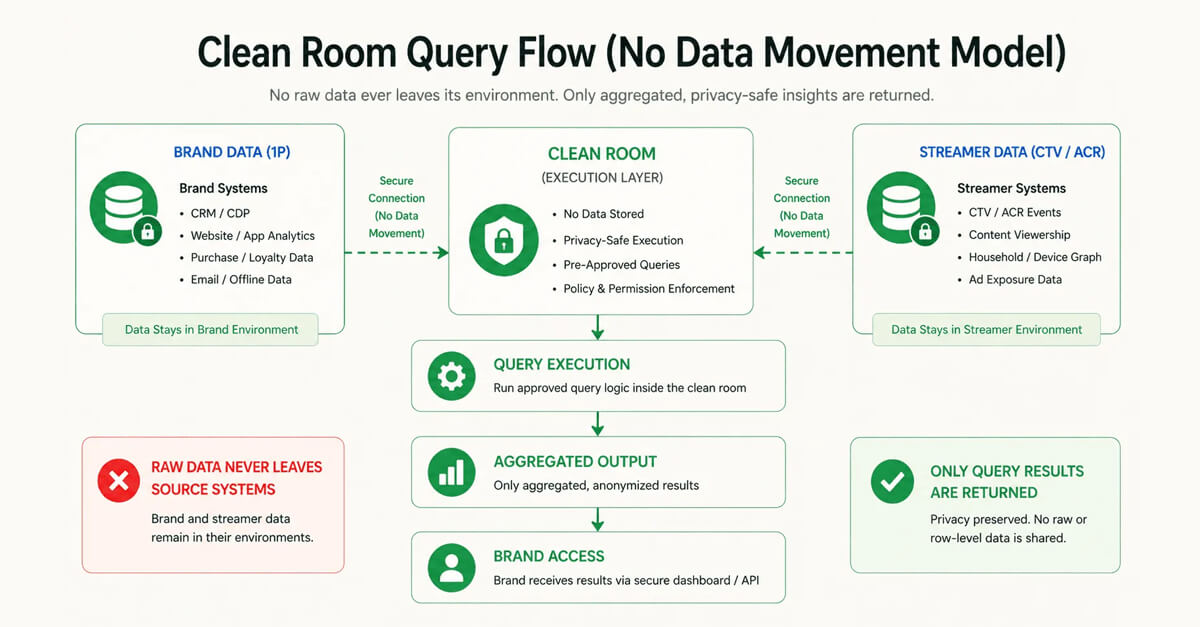

CTV Data Privacy and Clean Room Solutions for Measurement

Data isn’t moving the way it used to. This shift is subtle, but fundamental. You don’t pass it around freely anymore. You query it where it sits.

That’s where data clean room solutions for CTV measurement come in. Not storage layers. Execution layers. InfoSum, Habu, they’re built around controlled joins, not open access.

IAB’s been pushing standards here, but the reality is still fragmented. Every environment has its own rules. So the constraint changes. It’s no longer “who has the data.” It’s “who can actually run something on it.”

In one case, a campaign optimization loop slowed down because query results arrived minutes after delivery events, making real-time adjustments ineffective.

The ads you see are a result of aggregated insights, not individual tracking. Targeting feels relevant, but no single viewing session is directly exposed or shared across platforms.

Why is data movement no longer a viable growth strategy?

The balance between data movement and clean room strategy in advertising is shifting because moving raw user data across systems is increasingly restricted by regulation and platform controls. What used to be a scaling advantage, aggregating data centrally, is now limited by privacy constraints and access boundaries.

Platforms increasingly restrict data export while allowing controlled queries. Clean room models, supported by systems like InfoSum, replace movement with computation at the source. This reduces exposure risk but forces teams to rethink how data is accessed and activated.

- Restricted transfer: Raw user data cannot move freely across systems

- Shift to queries: Processing moves to where data is stored

Why are clean rooms execution environments, not storage systems?

Data clean room execution environments for CTV operate less like databases and more like controlled compute layers. They don’t store new data in the traditional sense; they allow queries to run across datasets without exposing underlying records.

Platforms like Habu and InfoSum enable joins between advertiser and publisher data while enforcing privacy rules. This changes how teams think about access. The value is in execution capability, not data accumulation.

- Compute-first design: Queries execute without exposing underlying user-level data

- Access control layer: Permissions govern what queries can retrieve or combine
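The compute-first idea can be sketched as a query that returns only aggregates and suppresses small groups. This is a simplified illustration, not the API of InfoSum, Habu, or any other clean room. The function name, the input shapes, and the minimum group size of 50 are hypothetical.

```python
from collections import Counter

# Hypothetical privacy rule: suppress any group smaller than this.
MIN_GROUP_SIZE = 50


def overlap_by_segment(advertiser_ids: set[str],
                       publisher_rows: list[tuple[str, str]],
                       min_group_size: int = MIN_GROUP_SIZE) -> dict[str, int]:
    """Count matched users per publisher segment, releasing only large groups.

    publisher_rows holds (hashed_user_id, segment) pairs owned by the publisher.
    The caller receives counts only; matched IDs are never returned.
    """
    counts = Counter(segment for user_id, segment in publisher_rows
                     if user_id in advertiser_ids)
    return {segment: n for segment, n in counts.items() if n >= min_group_size}
```

The join happens inside the function and only thresholded counts come out, which mirrors the point that the value lies in what can be executed rather than in what can be exported.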

Querying Data Without Owning the Compute

Federated data clean room query models shift execution to where data resides, but control over compute remains external. Queries run within predefined environments, not within your own processing stack.

This limits how logic can be applied. Custom transformations, real-time joins, or iterative queries depend on system constraints rather than internal flexibility.

- External execution: Queries run on systems not controlled by users

- Logic constraints: Processing limited by predefined compute environment rules

Where Latency Quietly Kills Opportunity

Clean room latency rarely appears as a visible delay. Results still arrive, but not always when they are needed for active decision cycles.

As query time increases, signals lose relevance. By the time outputs are available, campaigns may have already moved forward, reducing the impact of those insights.

- Delayed relevance: Insights arrive after decision windows have already passed

- Opportunity loss: Late signals reduce the ability to adjust active campaigns

Why is ACR data a structural advantage in CTV ecosystems?

ACR data in CTV advertising provides granular viewing signals captured directly from smart TVs, including channel changes, content exposure, and session behavior. Unlike third-party signals, this data originates within the device environment and remains under platform control.

In practice, only platform owners or integrated partners can access raw ACR streams. Others rely on aggregated or delayed outputs. This creates a structural gap where ownership of data pipelines directly influences targeting precision and measurement depth.

- Native signal access: Only platform owners see raw viewing-level behavior

- Aggregation gap: External systems receive delayed, filtered ACR outputs

What is the real cost of renting a clean room platform?

Clean room platform cost in CTV is not limited to pricing models or usage fees. The real cost shows up in how much control is lost when computation and data access are defined by external systems.

In the real world, rented clean rooms don’t slow things down in obvious ways. The delays show up in query execution, in how schemas can be changed, and in how much control teams actually have. Access exists, but within strict execution constraints. That trade-off starts affecting how quickly insights move from analysis to action in the mid to long term.

- Execution delay: Queries depend on external system processing timelines

- Limited flexibility: Schema and logic constrained by vendor-defined environments

First-Party Data Platforms for CTV: Ownership vs Vendor Dependency

First-party data sounds like control. The difference shows up when you try to activate it. With first-party data platform development, platforms that own ingestion and identity stitching can resolve users across sessions, not just events.

Others depend on partial signals passed through APIs. ACR data is a good example. Smart TV environments capture second-by-second viewing signals, but unless you control that pipeline, you only see aggregated outputs.

We have seen this play out in systems where data was available but not immediately usable. Collection stayed consistent, but delays in processing or stitching meant decisions were always working on slightly outdated signals.

That gap shows up at the ingestion layer. Owned systems process raw event streams (session starts, playback events, and ad exposure logs) in real time. Rented systems receive filtered data with delays that can stretch from minutes to hours. The delay isn’t obvious, but it limits how quickly targeting and optimization can react.

Ownership vs Dependency

| Capability | Owned First-Party Stack | Vendor-Dependent Stack |
|---|---|---|
| Data access | Raw event-level data | Aggregated or filtered outputs |
| Latency | Near real-time | Delayed (minutes to hours) |
| Identity control | Fully controlled | Partially abstracted |
| Flexibility | Custom logic possible | Limited to vendor APIs |
| Optimization speed | Continuous | Batch or delayed |
| Visibility | Full pipeline visibility | Black-box layers |
| Failure handling | Debuggable internally | Dependent on vendor support |

Why do SaaS AdTech platforms learn more from your data?

SaaS AdTech data ownership risks appear when platforms process user data but expose only partial outputs. Most systems don’t really expose how data flows internally. Ingestion, modeling, and optimization still sit inside vendor-controlled layers, not your infrastructure.

Over time, patterns start forming across campaigns and audiences. The platform adapts to them, often faster than you can see. What you receive are filtered outputs such as dashboards and reports, not raw signals and not the full picture. The learning happens internally, while your access stays abstracted and delayed.

- Hidden learning loop: Models improve internally without exposing full signal access

- Visibility gap: Reports show outcomes, not underlying behavioral patterns

Why does longitudinal data compound the advantage in CTV?

Longitudinal audience data from CTV becomes valuable because it tracks behavior across sessions, not just single interactions. With it, systems can build continuity in user behavior, linking exposure, engagement, and outcomes instead of isolated events.

This allows identity graphs to stabilize and improve with each interaction. Platforms that own this data refine targeting and attribution continuously, while those relying on short-term or external signals restart learning cycles repeatedly.

- Continuity advantage: Data accumulates across sessions, improving identity accuracy

- Learning compounding: Each interaction strengthens targeting and attribution models

Why do AdTech switching costs become a strategic trap?

AdTech vendor lock-in and switching costs increase as systems become more integrated into identity, delivery, and measurement layers. Moving away from a platform isn’t just a tooling change. It usually means giving up accumulated data, models, and the workflows built around them.

Usually, integrations take time to settle in, including APIs, identity mappings, and reporting pipelines. Rebuilding that elsewhere takes effort, but more than that, it slows things down. Performance doesn’t drop instantly, but it takes time to recover.

- Integration depth: Systems embed deeply across identity and delivery layers

- Migration penalty: Switching resets data continuity and operational stability

CTV DSP Platforms and Cross-Channel AdTech Solutions

Running multiple DSPs feels flexible until you try to coordinate decisions across them. Each system optimizes locally. No shared state.

That’s where the AdTech Strategy for CTV starts breaking down. Platforms like The Trade Desk optimize against their own identity graph, while MadHive leans heavily on CTV-native signals. Each operates within its own view of the user, so reach overlaps, frequency drifts, and bids start conflicting.

The issue becomes clearer in how decisions are made. In CTV, decisions have to happen quickly, often before the video reaches the next ad break. DSPs handle bidding, SSAI systems manage when ads are inserted, and identity layers sit in between, but they don’t naturally work as one system. So the problem isn’t capability. It’s fragmentation across layers.

In one setup, running two DSPs without shared identity increased frequency overlap by over 30%, even though each platform was individually optimized.

DSP/Vendor Comparison Table

| Vendor | Type | CTV Support | SSAI | Identity Support | Clean Room |
|---|---|---|---|---|---|
| The Trade Desk | DSP | High | No | UID2 | Partial |
| MadHive | DSP | Native CTV | Limited | Proprietary | Yes |
| Magnite | SSP | High | Yes | RampID | Yes |
| FreeWheel | SSP/SSAI | High | Yes | Multiple | Yes |
| AWS MediaTailor | SSAI | High | Yes | External | Limited |
| Yospace | SSAI | High | Yes | External | Limited |
| LiveRamp | Identity | N/A | No | RampID | Yes |
| UID2 | Identity | N/A | No | UID2 | Yes |

Why do multiple DSPs create inefficiencies in CTV?

Multi-DSP campaign inefficiencies in CTV advertising show up when campaigns are split across platforms that don’t share context. Each DSP optimizes within its own system, using its own identity graph and bidding logic, without awareness of parallel activity elsewhere.

Strategically speaking, platforms like The Trade Desk and MadHive can perform well individually. But when used together, overlap begins to surface, audiences get duplicated, frequency caps drift, and spend starts competing instead of aligning.

- Overlap leakage: Same users reached multiple times across separate DSPs

- Split optimization: Each platform optimizes locally without shared decision context
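Overlap leakage becomes measurable once exposure logs from each DSP are keyed to the same identity. The sketch below assumes that resolution has already happened, which is the hard part in practice. The function name, the log shape, and the cap of three are hypothetical.

```python
from collections import Counter


def over_cap_households(dsp_logs: dict[str, list[str]], cap: int) -> dict[str, int]:
    """Households whose combined exposures exceed a cap each DSP respects alone.

    dsp_logs maps a DSP name to the household IDs it served, one entry per
    impression. Assumes the IDs are already resolved to a shared identity.
    """
    combined = Counter()
    for served in dsp_logs.values():
        combined.update(served)
    return {household: n for household, n in combined.items() if n > cap}
```

Each DSP in this setup can stay within its own cap while the household still sees double the intended frequency, because neither platform knows about the other's impressions.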

What does a “single brain” change in cross-channel decisions?

Unified AdTech decision engines for cross-channel campaigns change how decisions actually get made. Identity, bidding, and delivery don’t sit in separate systems anymore; they start operating within the same layer. When that happens, decisions no longer operate in isolation. Signals carry over, priorities shift in real time, and coordination doesn’t need to be stitched together after execution.

Duplication drops, and spend is no longer split across platforms the same way. Adjustments happen in one place: budgets shift, overexposed audiences get filtered out, and performance signals feed straight back into the same system.

- Shared context: Identity and performance signals flow through one system

- Coordinated decisions: Budget and targeting align across channels in real-time

One User, One Decision Engine

Unified user identity and decisioning in AdTech platforms becomes critical when the same user appears across multiple channels. Without a single decision layer, systems treat those interactions independently.

When identity and decisioning sit together, exposure can be managed more precisely. The system doesn’t guess across platforms; it responds to the same user context each time.

- Unified context: Same user signals inform every decision across channels

- Reduced duplication: Multiple systems stop targeting the same user separately
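One way to picture a single decision layer is a shared exposure counter that every channel consults before serving. This is a simplified sketch: the class name, the channel labels, and the absence of a time window are all assumptions made for illustration.

```python
from collections import defaultdict


class SharedFrequencyCap:
    """One exposure counter consulted by every channel before serving."""

    def __init__(self, cap):
        self.cap = cap
        self._count = defaultdict(int)  # impressions served per user
        self.served = []                # (user_id, channel) audit trail

    def try_serve(self, user_id, channel):
        """Serve only if the user is under the cap across all channels."""
        if self._count[user_id] >= self.cap:
            return False
        self._count[user_id] += 1
        self.served.append((user_id, channel))
        return True


engine = SharedFrequencyCap(cap=2)
results = [
    engine.try_serve("u1", "ctv"),
    engine.try_serve("u1", "display"),
    engine.try_serve("u1", "ctv"),  # third exposure is refused
]
```

Because the counter is keyed by user rather than by channel, the third request is declined no matter which channel asks.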

Opportunity Cost as a First-Class Signal

Programmatic opportunity cost optimization shifts focus from what was bought to what was missed. Every impression carries an alternative that didn’t get selected, and that trade-off starts to matter at scale.

When systems track missed opportunities alongside wins, budget allocation begins to change. Decisions aren’t just reactive; they start accounting for what could have performed better.

- Missed alternatives: Unselected impressions reveal hidden performance potential

- Allocation shift: Budgets adjust based on both wins and missed signals
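As a rough illustration, opportunity cost can be expressed as the gap between the best unselected option and the one that was bought. The `Candidate` fields and the values below are hypothetical; `expected_value` stands in for whatever value model a platform actually uses.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    cost: float            # price paid if selected
    expected_value: float  # modeled value of the impression (assumed input)


def net_value(candidate):
    return candidate.expected_value - candidate.cost


def opportunity_cost(chosen, alternatives):
    """Net value of the best unselected option minus the net value of the
    chosen one, floored at zero when the chosen option was already best."""
    if not alternatives:
        return 0.0
    best_alternative = max(net_value(a) for a in alternatives)
    return max(0.0, best_alternative - net_value(chosen))


chosen = Candidate("premium_pod", cost=3.0, expected_value=5.0)
missed = [
    Candidate("mid_roll", cost=2.0, expected_value=6.0),
    Candidate("late_night", cost=1.0, expected_value=2.0),
]
cost = opportunity_cost(chosen, missed)
```

Logging this number next to each win is what lets budget allocation react to missed alternatives, not only to what was purchased.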

CTV Attribution Solutions: Connecting Advertising to Revenue

Brand metrics held up for a while. They don’t hold up under scrutiny anymore.

With CTV attribution platform development, the shift is toward deterministic linkage between exposure and outcome. Not modeled lift.

In one deployment, a Tuvoc-built SDK adapter was already handling around 27,000 requests per second, roughly 2 billion events in a day. The service was built on a Go-based concurrent worker model, tuned to reduce queue contention that was slowing down earlier pipelines.

The response times stayed below 50 ms, and ingestion into PostgreSQL didn’t drop records. No replays, no patch fixes later. Attribution pipelines stop waiting on batch cycles. Events start showing up while they’re still useful, not hours later.
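The quoted figures can be sanity-checked with a few lines of arithmetic. The sketch below assumes the 27,000 requests per second rate is sustained for a full day and treats 50 ms as the upper bound on latency; the in-flight estimate uses Little's law (concurrency = arrival rate × time in system).

```python
REQUESTS_PER_SECOND = 27_000   # rate quoted in the text
LATENCY_MS = 50                # upper bound quoted in the text
SECONDS_PER_DAY = 24 * 60 * 60

# Daily volume if the rate were sustained around the clock.
events_per_day = REQUESTS_PER_SECOND * SECONDS_PER_DAY

# Little's law: requests in flight = arrival rate * time each one spends in the system.
max_in_flight = REQUESTS_PER_SECOND * LATENCY_MS / 1000

print(f"{events_per_day:,} events/day, up to {max_in_flight:.0f} requests in flight")
```

At a sustained rate, that is a little over 2.3 billion events a day, in line with the "roughly 2 billion" figure, with on the order of a thousand requests in flight at any moment.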

At CTV scale ($24B+ spend, multi-hour daily consumption), this changes how optimization decisions behave, because signals become available while campaigns are still active. Without that loop, optimization is still guesswork.

In a real deployment, attribution looked accurate until we aligned timestamps across systems. Once corrected, a significant portion of conversions shifted outside the original attribution window.
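The effect of timestamp alignment is easy to reproduce. This sketch assumes a 24-hour attribution window and a commerce system whose clock runs one hour behind the ad server; both numbers are hypothetical, and all timestamps are treated as UTC.

```python
from datetime import datetime, timedelta

ATTRIBUTION_WINDOW = timedelta(hours=24)  # hypothetical window


def within_window(exposure_ts, conversion_ts, skew=timedelta(0)):
    """True if the conversion lands inside the window after the
    conversion-side clock is corrected by `skew`."""
    corrected = conversion_ts + skew
    lag = corrected - exposure_ts
    return timedelta(0) <= lag <= ATTRIBUTION_WINDOW


exposure = datetime(2024, 1, 1, 12, 0)
conversion_as_logged = datetime(2024, 1, 2, 11, 30)  # 23.5 hours later, as logged

before_alignment = within_window(exposure, conversion_as_logged)
after_alignment = within_window(
    exposure, conversion_as_logged, skew=timedelta(hours=1)
)
```

The same conversion is credited before alignment and dropped after it, without any change in user behavior.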

Why do brand metrics collapse under CFO scrutiny?

CTV advertising ROI measurement challenges surface when brand metrics fail to connect spend with revenue. Impressions, reach, and completion rates show exposure, but they don’t explain financial outcomes. Under scrutiny, these metrics lack the clarity needed to justify rising CTV budgets.

At current pricing, with CPMs often exceeding $20, the expectation shifts toward measurable return. Without a direct linkage between exposure and conversion, reporting starts to look incomplete. That gap becomes more visible when finance teams compare media spend with actual sales movement.

- Measurement issue: Key performance metrics fail to link directly to revenue

- Financial pressure: Higher CPMs demand exact attribution to business outcomes

Why is SKU-level data the new truth in CTV attribution?

SKU-level attribution in CTV advertising changes how performance is measured. It shifts the focus toward individual products rather than broad campaign outcomes. Systems start tracking what actually moves: items, not just conversions. Behavior gets tied back across sessions and channels, so measurement feels less aggregated and more specific.

Practically, this requires integrating commerce data with identity and delivery pipelines. When exposure events align with SKU-level transactions, attribution becomes more precise. Platforms that support this level of granularity move beyond directional insights toward measurable impact.

- Granular linkage: Attribution connects exposure to individual product purchases

- Precision shift: Measurement moves from campaign-level to item-level outcomes
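A simplified sketch of that join, assuming exposure logs and transactions already share a household ID. The last-touch rule, the three-day window, and every identifier below are illustrative choices, not a description of any specific platform.

```python
from collections import defaultdict
from datetime import datetime, timedelta

exposures = [  # (household_id, campaign_id, exposure_time)
    ("hh1", "camp_a", datetime(2024, 1, 1, 20, 0)),
    ("hh2", "camp_a", datetime(2024, 1, 1, 21, 0)),
]
transactions = [  # (household_id, sku, units, purchase_time)
    ("hh1", "SKU-100", 2, datetime(2024, 1, 2, 9, 0)),
    ("hh1", "SKU-200", 1, datetime(2024, 1, 9, 9, 0)),  # outside the window
    ("hh3", "SKU-100", 1, datetime(2024, 1, 2, 9, 0)),  # never exposed
]


def attribute_by_sku(exposures, transactions, window=timedelta(days=3)):
    """Credit units per (campaign, SKU) to the most recent exposure that
    preceded the purchase within the window (last-touch, by assumption)."""
    by_household = defaultdict(list)
    for household, campaign, ts in exposures:
        by_household[household].append((ts, campaign))

    credited = defaultdict(int)
    for household, sku, units, purchased in transactions:
        eligible = [
            (ts, campaign)
            for ts, campaign in by_household.get(household, [])
            if timedelta(0) <= purchased - ts <= window
        ]
        if eligible:
            _, campaign = max(eligible)  # latest qualifying exposure
            credited[(campaign, sku)] += units
    return dict(credited)


sku_credit = attribute_by_sku(exposures, transactions)
```

Only one of the three purchases is credited. The other two fall out because of timing or missing exposure, which is the kind of detail campaign-level totals hide.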

Measurement Models Comparison

| Model Type | What It Measures | Signal Source | Latency | Limitation |
|---|---|---|---|---|
| Brand lift | Awareness/recall | Surveys/panels | High | No direct revenue link |
| Pixel-based | Conversions | Web/app tracking | Medium | Breaks in CTV environments |
| Probabilistic attribution | Estimated conversions | Modeled signals | Medium–high | Accuracy varies |
| SKU-level attribution | Item-level outcomes | Transaction + exposure data | Lower | Requires deep integration |

From Impressions to Items Sold

CTV impression-to-conversion tracking becomes harder to ignore as spend increases. US CTV programmatic ad spend is projected to reach $24.44 billion, according to eMarketer, which raises pressure on linking exposure to actual outcomes.

In reality, impressions only gain value when tied to item-level sales. Without identity continuity and commerce data integration, they remain directional signals rather than measurable business impact.

- Spend pressure: Higher investment demands a clearer linkage to revenue

- Signal dependency: Conversion clarity depends on identity and commerce data

Why do clean room joins add latency to attribution?

Data clean room models for CTV attribution rely on joining datasets across controlled environments, where queries replace direct data movement. This preserves privacy but changes how quickly results can be generated and used.

Query execution depends on multiple systems coordinating responses. Each join introduces processing time, especially when datasets are large or distributed. Attribution signals arrive later than exposure events, which affects how quickly campaigns can adjust based on performance.

- Query overhead: Data joins increase processing time across systems

- Delayed signals: Attribution arrives later than real-time delivery events

Attribution Windows vs. Purchase Reality

Attribution window limitations in CTV advertising appear when fixed timeframes fail to match how people actually buy. Some purchases happen quickly, others take longer, and rigid windows don’t capture both.

As a result, systems either miss delayed conversions or over-credit early interactions. This mismatch creates distortions in performance reporting, especially when attribution depends on predefined time boundaries.

- Timing mismatch: Fixed windows fail to reflect real purchase behavior

- Credit distortion: Conversions misattributed due to rigid attribution timelines
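To see the timing mismatch in numbers, the sketch below computes what share of conversions a fixed window captures. The lag values are made up for illustration; real distributions vary by product category.

```python
def capture_rate(lags_in_days, window_days):
    """Share of conversions whose exposure-to-purchase lag fits the window."""
    if not lags_in_days:
        return 0.0
    captured = sum(1 for lag in lags_in_days if 0 <= lag <= window_days)
    return captured / len(lags_in_days)


# Hypothetical days between exposure and purchase for eight conversions.
lags = [0.5, 1, 2, 6, 10, 21, 30, 45]

seven_day = capture_rate(lags, 7)
thirty_day = capture_rate(lags, 30)
```

With these sample lags, a 7-day window sees half the conversions and a 30-day window sees most of them. Widening the window recovers delayed purchases, but it also raises the odds of crediting exposures that had little to do with the sale.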

CTV Ad Fraud Prevention Solutions and Zero-Trust Architecture

Fraud in CTV doesn’t always look obvious. It blends into legitimate supply paths. Even with CTV ad fraud prevention solutions, most systems rely on post-bid signals. By then, the spend has already cleared.

Analysis from Pixalate shows billions of CTV transactions across thousands of apps, with measurable invalid traffic patterns. DoubleVerify has reported single bot operations generating millions in monthly fraudulent spend.

Detection isn’t the limiting factor. It’s timing. So enforcement has to move pre-bid, not post-event.

Why does CTV attract sophisticated fraud?

CTV ad fraud types and risks increase because higher CPMs make the channel more attractive to bad actors. When pricing moves up, the incentive changes. Even small manipulations start to matter because they scale faster across high-value inventory.

Data from DoubleVerify and Pixalate points to patterns that don’t look obviously fake at first. Fraudulent traffic blends in across devices, apps, and even viewing behavior, so detection takes longer than expected.

- High-value target: Premium CPMs attract organized and persistent fraud activity

- Signal mimicry: Fraudulent traffic imitates real devices and viewing behavior

Why does post-bid verification fail to control CTV fraud effectively?

Post-bid ad verification limitations in CTV appear because detection happens after impressions are already served and paid for. By the time fraud signals are identified, the financial impact has already occurred.

Verification happens after patterns are visible. Systems flag irregular traffic once enough data has passed through, not before. That helps with reporting, but the exposure has already happened. In high-volume campaigns, even a short delay is enough for invalid traffic to pass through before anything gets flagged.

- Delayed detection: Fraud identified after the spend has already been committed

- Limited prevention: Post-bid tools cannot block invalid impressions upfront
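The cost of a detection delay can be estimated with simple arithmetic. Every input below is hypothetical except the $20 CPM, which echoes the pricing level mentioned earlier in the article.

```python
def spend_before_detection(impressions_per_second, ivt_rate, cpm, delay_seconds):
    """Dollars spent on invalid impressions before a post-bid flag arrives."""
    invalid_impressions = impressions_per_second * ivt_rate * delay_seconds
    return invalid_impressions * cpm / 1000  # CPM is the price per 1,000 impressions


# Hypothetical campaign: 1,000 impressions/s, 5% invalid traffic, one-hour detection lag.
wasted = spend_before_detection(
    impressions_per_second=1_000, ivt_rate=0.05, cpm=20.0, delay_seconds=3_600
)
print(f"${wasted:,.0f} committed before the first flag")
```

Under these assumptions, a one-hour lag costs a few thousand dollars on a single campaign, and the figure scales linearly with volume, price, and delay.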

Detecting Fraud After Payment Is Not Protection

Post-bid fraud detection limitations in CTV become visible when invalid traffic is identified after delivery. DoubleVerify, as reported by TVTechnology, suggests that some bot operations have generated over $7.5 million per month in wasted spend while mimicking legitimate viewing behavior.

Even when flagged later, recovery remains limited. The loss sits inside campaign delivery, especially when fraud blends into normal traffic patterns.

- Hidden fraud scale: Invalid traffic can mimic real users at scale

- Irreversible spend: Post-bid detection cannot recover lost budget

Why scale makes fraud detection harder in CTV ecosystems

Fraud detection becomes harder as transaction volume increases. Pixalate reports that analysis across 7.7 billion programmatic CTV transactions and 185,000+ apps shows how invalid traffic spreads without triggering immediate anomalies.

At that scale, signals fragment. Patterns don’t appear obvious in isolation, which makes detection slower and more dependent on aggregated analysis.

- Signal dispersion: Fraud is distributed across apps without obvious spikes

- Detection delay: Certain patterns emerge only once large-scale aggregation is done
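A small sketch of why aggregation matters, assuming request logs reduced to (device, app) pairs. The thresholds and device names are hypothetical; real detection uses far richer signals.

```python
from collections import Counter


def flag_devices(events, per_app_limit, global_limit):
    """events: list of (device_id, app_id) pairs.
    Returns (devices flagged within a single app, devices flagged after aggregation)."""
    per_app = Counter(events)
    per_device = Counter(device for device, _ in events)
    local = {device for (device, _), n in per_app.items() if n > per_app_limit}
    aggregated = {device for device, n in per_device.items() if n > global_limit}
    return local, aggregated


# A bot spreads 3 requests across each of 10 apps; a real TV sends 4 to one app.
events = [("bot", f"app{i}") for i in range(10) for _ in range(3)]
events += [("tv1", "app0")] * 4

local_flags, aggregated_flags = flag_devices(events, per_app_limit=5, global_limit=20)
```

Per-app checks flag nothing because no single app sees unusual volume. The pattern only appears once activity is summed across apps.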

How does pre-bid verification change CTV fraud prevention?

Pre-bid fraud prevention in CTV shifts control earlier in the decision process by filtering inventory before bids are placed. Instead of reacting to fraud signals, systems evaluate supply quality before committing to spend.

In practice, this involves combining identity signals, supply path validation, and historical traffic patterns. Platforms enforce rules that exclude suspicious inventory, reducing exposure before delivery begins rather than correcting it afterward.

- Upfront filtering: Invalid inventory is excluded before bidding decisions occur

- Supply validation: Trust enforced through verified supply paths and signals
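The combination of checks described above might look something like this. It is a sketch under stated assumptions: the `BidRequest` fields, the seller allowlist (which could be derived from sources such as app-ads.txt files), the invalid-traffic rates, and the threshold are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class BidRequest:
    app_id: str
    seller_id: str
    device_id: str


# Hypothetical reference data a pre-bid filter might consult.
AUTHORIZED_SELLERS = {"app.news": {"seller-1"}, "app.sports": {"seller-1", "seller-2"}}
HISTORICAL_IVT_RATE = {"app.news": 0.01, "app.sports": 0.12}
BLOCKED_DEVICES = {"dev-bad"}
MAX_IVT_RATE = 0.05


def should_bid(request):
    """Return (decision, reason) before any spend is committed."""
    if request.seller_id not in AUTHORIZED_SELLERS.get(request.app_id, set()):
        return False, "unauthorized_seller"
    if request.device_id in BLOCKED_DEVICES:
        return False, "blocked_device"
    # Apps with no history are treated as risky by default (an assumption).
    if HISTORICAL_IVT_RATE.get(request.app_id, 1.0) > MAX_IVT_RATE:
        return False, "high_historical_ivt"
    return True, "ok"


decision = should_bid(BidRequest("app.news", "seller-1", "dev-1"))
```

Returning a reason alongside the decision keeps the filter auditable, which matters when blocked inventory needs to be reviewed later.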

Verify First, Then Spend

Pre-bid verification in programmatic buying shifts validation earlier: inventory is assessed before bids are placed. This changes how risk is handled during campaign execution.

Instead of filtering outcomes, systems evaluate supply signals upfront. Suspicious inventory is excluded before delivery begins, reducing exposure rather than correcting it afterward.

- Upfront filtering: Invalid supply blocked before bidding decisions occur

- Risk containment: Exposure reduced before the spend is committed to inventory

AI in AdTech Platforms: Autonomous Optimization and Decision Systems

Automation in AdTech isn’t new. What’s changed is the execution scope. With AI-powered AdTech platforms, systems don’t just adjust bids; they rebalance budget allocation across channels.

The system identifies and cuts underperforming segments, then shifts spend based on near-real-time signals.

That only works when identity, delivery, and attribution data sit in one place. In most production systems, optimization loops run every few seconds to minutes, depending on pipeline latency. When those inputs are fragmented, decisions are made on partial or delayed data.

The limitation sits outside the model. It’s access. Without a unified infrastructure, AI optimizes, but not in real time, and not across the full system.
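A minimal sketch of one rebalancing step, assuming per-segment ROAS is already available in one place. The ROAS floor, the proportional redistribution rule, and the segment names are illustrative assumptions; production systems add pacing, minimum sample sizes, and constraints.

```python
def rebalance(budgets, roas, floor):
    """Pause segments whose ROAS is below `floor` and redistribute their
    budget to the remaining segments in proportion to ROAS."""
    if floor <= 0:
        raise ValueError("floor must be positive")
    keep = [segment for segment in budgets if roas[segment] >= floor]
    if not keep:
        return dict(budgets)  # nothing qualifies; leave allocation unchanged
    freed = sum(b for segment, b in budgets.items() if segment not in keep)
    weight_total = sum(roas[segment] for segment in keep)
    return {
        segment: budgets[segment] + freed * roas[segment] / weight_total
        if segment in keep
        else 0.0
        for segment in budgets
    }


budgets = {"sports": 100.0, "news": 100.0, "late_night": 100.0}
roas = {"sports": 3.0, "news": 1.0, "late_night": 0.5}
new_budgets = rebalance(budgets, roas, floor=1.0)
```

Total budget is conserved: the underperforming segment drops to zero and its spend moves to the segments that are returning more. How often this step can run depends on how fresh the ROAS inputs are.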

Algorithms vs Autonomous Systems

| Capability | Traditional Algorithms | Autonomous Systems |
|---|---|---|
| Decision scope | Single variable (bid, segment) | Multi-variable (budget, channel, timing) |
| Data dependency | Predefined inputs | Continuous multi-source signals |
| Reaction speed | Periodic updates | Continuous adaptation |
| Coordination | Limited across channels | Cross-channel coordination |
| Failure mode | Local inefficiency | System-wide misalignment (if data is incomplete) |
| Human intervention | Required frequently | Reduced, but still necessary for constraints |

What can autonomous agents do beyond traditional algorithms?

Autonomous AdTech systems for campaign optimization go beyond fixed rule-based logic by adjusting multiple variables at once. Instead of optimizing bids alone, these systems evaluate budget allocation, audience exposure, and channel performance together, reacting to changes as they happen.

In a practical sense, agents operate across decision layers rather than within a single function. They don’t wait for manual triggers or isolated signals. When data flows continuously, these systems begin to coordinate actions across bidding, targeting, and pacing without relying on predefined sequences.

- Multi-layer control: Decisions span bidding, pacing, and targeting simultaneously

- Continuous adjustment: Systems respond without waiting for manual intervention cycles

Negotiation, Not Just Optimization

AI bid negotiation in AdTech changes how systems interact with supply. Instead of only adjusting bids, agents begin evaluating trade-offs across inventory, pricing, and expected outcomes.

This shifts behavior from reactive optimization to active negotiation. Systems don’t just respond to auctions; they start influencing how bids are placed based on broader context.

- Dynamic trade-offs: Bids reflect value across inventory, not isolated auctions

- Context awareness: Decisions adapt based on supply conditions and signals

Exception Handling Without Human Escalation

Autonomous AdTech exception handling becomes critical when systems encounter unexpected patterns: traffic spikes, signal gaps, or delivery mismatches. These situations can’t always wait for manual intervention.

Usually, agents begin resolving edge cases within the system itself. Instead of escalating issues, they adjust parameters and continue execution, reducing disruption during active campaigns.

- Self-resolution: Systems adjust behavior without pausing for human input

- Continuity control: Campaign execution continues despite unexpected conditions
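One plausible shape for self-resolution is a bounded adjustment: the agent changes a parameter in small steps and never leaves a preset range. The function name, thresholds, and step size here are assumptions for illustration only.

```python
def adjust_bid_multiplier(current, signal_age_s, win_rate, target_win_rate,
                          max_signal_age_s=60, step=0.1, bounds=(0.5, 1.5)):
    """Nudge the bid multiplier instead of pausing the campaign.
    Stale signals trigger a cautious back-off; otherwise steer toward
    the target win rate. The result is always clamped to `bounds`."""
    low, high = bounds
    if signal_age_s > max_signal_age_s:
        proposed = current - step  # signal gap: reduce exposure, keep running
    elif win_rate < target_win_rate:
        proposed = current + step
    elif win_rate > target_win_rate:
        proposed = current - step
    else:
        proposed = current
    return min(high, max(low, proposed))


backed_off = adjust_bid_multiplier(1.0, signal_age_s=300, win_rate=0.2, target_win_rate=0.3)
capped = adjust_bid_multiplier(1.5, signal_age_s=5, win_rate=0.1, target_win_rate=0.3)
```

The clamp is the safety mechanism: the campaign keeps running through a signal gap, but the agent cannot move the parameter outside limits a human has set.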

Why is infrastructure control the real limit on AI in AdTech?

AI AdTech infrastructure control determines how much of the system an algorithm can actually influence. Even advanced models cannot operate effectively if they lack access to identity, delivery, and performance data within the same environment.

In reality, most AI systems run within boundaries set by external platforms. Data access is partial, execution layers are separated, and decision loops depend on delayed inputs. The limitation isn’t intelligence, it’s the inability to act across the full pipeline.

- Access limitation: AI cannot operate beyond available data and controls

- Fragmented execution: Disconnected systems restrict end-to-end decision-making capability

Why Safety and Autonomy Require Ownership

AI safety and infrastructure control in AdTech systems depend on how much of the environment is actually accessible. Without ownership, systems operate within boundaries that limit both visibility and action.

Autonomy without control introduces risk. When systems cannot fully observe or influence execution layers, safety mechanisms remain partial, and decision loops stay constrained.

- Limited visibility: External systems restrict full access to critical signals

- Constrained control: Actions limited by infrastructure not owned or managed

AdTech Platform Valuation: How CTV Infrastructure Drives Growth

Valuation conversations often center on growth metrics. Underneath, it’s about control over revenue pathways.

AdTech platform valuation and infrastructure ownership determine how much margin a platform retains versus passes through to intermediaries.

Platforms operating on third-party DSP + data layers typically sacrifice 15–30% in effective margin due to fees and data leakage. Owned infrastructure retains that spread.

So valuation isn’t tied to spend volume alone. It’s tied to how much of that spending you actually control. When owned infrastructure processes billions of requests at sub-50 ms latency, margins are retained and valuation multiples tend to improve.
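For a sense of scale, the 15–30% range can be turned into dollars. The sketch assumes the percentage applies directly to media spend flowing through the platform, and the $10M annual spend figure is hypothetical.

```python
def intermediary_cost_range(annual_spend, low_pct=15, high_pct=30):
    """Return the (low, high) dollar amount passed through to intermediaries."""
    return annual_spend * low_pct / 100, annual_spend * high_pct / 100


# Hypothetical platform with $10M in annual media spend.
low, high = intermediary_cost_range(10_000_000)
print(f"${low:,.0f} to ${high:,.0f} per year")
```

On that spend, the spread is between $1.5M and $3M a year, which is the amount owned infrastructure would have to cost less than to pay for itself.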

Why does platform dependency concern AdTech investors?

AdTech platform dependency risks for investors don’t show up immediately. They start appearing when key capabilities (identity, delivery, and measurement) sit outside the system. Over time, the effect becomes clearer. Performance depends on external decisions, and margins begin to reflect constraints that the platform itself doesn’t control.

In reality, dependency doesn’t fail suddenly. It shows up in limits: restricted data access, fixed pricing layers, and slower iteration cycles. It creeps in with delays here and there. Eventually, the system doesn’t feel as flexible as it should.

- External reliance: Core functions run on systems the company doesn’t control

- Control limits: Growth constrained by systems the company does not own

Why does technical optionality increase AdTech platform valuation?

AdTech infrastructure valuation multiples tend to improve when platforms retain flexibility in how systems are built and operated. Optionality, in this context, shows up as the ability to switch vendors, extend systems, or build internally without being tied to one path.

That flexibility doesn’t always show immediate results. It becomes more visible when conditions change: identity shifts, regulations evolve, or supply behaves differently. Systems that can adapt without major rebuilds tend to hold their position better.

- System flexibility: Switching paths doesn’t require rebuilding core infrastructure

- Resilience signal: Adaptation happens without disrupting existing system behavior

Why SSAI adoption slows despite performance gains

Even with clear delivery advantages, SSAI adoption remains uneven, according to IAB/TVTechnology. Around 72% of publishers report technical complexity as a barrier to implementing standardized server-side measurement systems.

Integration across identity, delivery, and measurement layers introduces coordination challenges that aren’t always visible upfront.

- Integration friction: Multiple systems must align across delivery layers

- Implementation barrier: Complexity slows adoption despite performance benefits

What do investors assess in AdTech infrastructure due diligence?

AdTech due diligence for platform evaluation focuses on how systems actually operate, not just what they claim to do. Investors look at data flow, ownership of key components, and how tightly different layers are integrated.

In practice, audits go beyond architecture diagrams. They examine latency, data consistency, and how identity, delivery, and attribution connect in real environments. Gaps between systems often reveal risks that are not visible in surface-level documentation.

- Data flow clarity: How signals move across identity, delivery, and attribution layers

- System cohesion: Degree of integration between core platform components

AdTech Strategy for CTV Platforms: Build vs Buy and Strategic Control

Build vs. buy shows up in operational decisions: timelines, cost structures, and control. Within the AdTech strategy for CTV, renting infrastructure accelerates launch but locks teams into vendor-defined constraints: identity models, reporting latency, and pricing layers.

Teams investing in AdTech development services take longer upfront, but gain control over identity graphs, SSAI pipelines, and attribution logic. That trade-off compounds. Over time, it shifts from a cost decision to strategic positioning.

What changes when AdTech shifts from tenant to ownership?

Build vs. buy AdTech platform economics start to change once platforms move away from renting infrastructure. Costs are no longer tied only to usage or vendor pricing. Instead, they reflect how systems are built, maintained, and extended over time.

The shift doesn’t feel dramatic at first. But in the long term, dependencies reduce, and margins behave differently. Systems begin to operate with more predictability, and decisions are less constrained by external pricing or access rules.

- Cost structure shift: Spend moves from usage fees to infrastructure investment

- Margin control: Ownership allows retention of value across the pipeline

Why is control the only durable advantage in AdTech?

A custom AdTech platform’s competitive advantage becomes clearer when control extends across identity, delivery, and measurement layers. When systems operate within owned infrastructure, decisions are not limited by external constraints or vendor-defined logic.

The difference isn’t always visible upfront. It shows up when systems need to adapt to new signals, changing regulations, and shifting supply conditions. Platforms with control adjust more directly, while others work within boundaries that don’t always align with their needs.

- Decision ownership: Core logic runs within systems that the platform controls

- Adaptation ability: Changes implemented without relying on external vendors

What is the real cost of delaying AdTech infrastructure investment?

The cost of delaying AdTech infrastructure investment rarely appears as a single event. It builds gradually as systems continue to rely on external platforms for identity, delivery, and measurement.

At first, everything still works. But as time passes, gaps begin to show: slower iteration, limited flexibility, and increasing dependency. By the time a change becomes necessary, rebuilding systems often requires more effort than starting earlier would have.

- Delayed buildup: Constraints accumulate gradually rather than appearing instantly

- Recovery effort: Late transitions require rebuilding multiple system layers

FAQs

CTV vs. cookie-based AdTech architecture limitations come from how identity works in streaming environments. Cookies operate at the browser level, while CTV operates at the device or household level, where multiple users share the same screen.

That shift changes everything downstream. Tracking becomes less precise, session-based logic stops working reliably, and attribution starts depending on identity graphs instead of direct user-level signals.

CTV programmatic platform limitations for video payload and metadata arise because streaming environments carry far more data per request. Video duration, ad pods, device type, and content context all need to be processed together.

Most programmatic systems were built for lightweight banner requests. When exposed to continuous streams and larger payloads, processing slows down, and timing constraints begin to break under load.

Revenue risk from probabilistic identity matching in CTV increases because small targeting errors scale quickly in high-CPM environments. When impressions are expensive, even slight mismatches repeat across frequency and audience overlap.

The issue isn’t a single missed conversion. It shows up across campaigns as duplicated reach, inefficient spend, and reduced return, especially when identity signals are inconsistent.

In 2026, a deterministic identity graph for CTV becomes difficult to avoid once scale increases. External identity solutions help, but they rarely provide complete coverage across devices, platforms, and sessions.

As systems grow, gaps in identity start affecting targeting and attribution. Building internally changes how identity gets handled. In such scenarios, the system resolves identity through its own pipeline instead of relying on external graphs.

SSAI benefits for CTV streaming reliability and ad delivery come from how timing works in video playback. Ads need to be ready before the stream reaches the insertion point, not after.

Client-side logic struggles with this. SSAI prepares the stream in advance, which reduces missed insertions and keeps playback stable, even when network or device conditions vary.

Manoj Donga

Manoj Donga is the MD at Tuvoc Technologies, with 17+ years of experience in the industry. He has strong expertise in the AdTech industry, handling complex client requirements and delivering successful projects across diverse sectors. Manoj specializes in PHP, React, and HTML development, and supports businesses in developing smart digital solutions that scale as the business grows.
